Five Things to Consider to Minimize Data Center Downtime

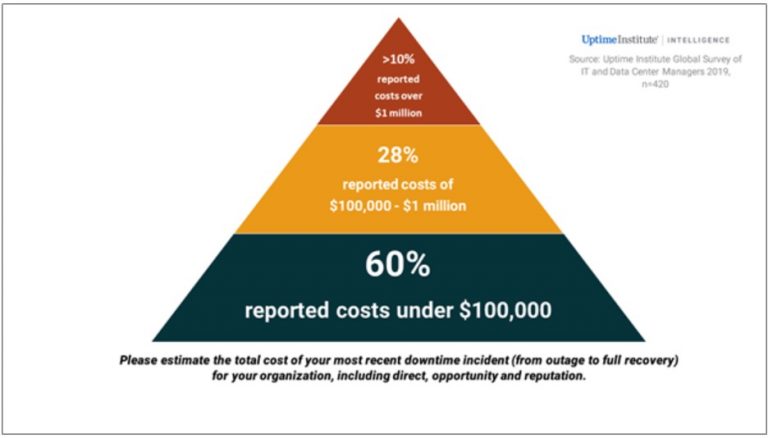

Data center outages are costly. Every period of downtime brings financial losses, whatever its cause. In addition to the financial repercussions, a data center company can also suffer data loss, a damaged reputation, and lost opportunities. It is therefore imperative to minimize data center downtime.

Determining the causes of downtime is the easier part. Preventing a recurrence with the right plans and procedures is where most companies fall short. Here are some of the best strategies to combat data center outages:

1. Minimize Management Errors

Management errors remain the number one reason behind downtime. In 2019, the Uptime Institute conducted a survey on data center outages and found that 60% of companies believed that their data center downtime could have been prevented with better management. More striking, among outages that caused more than $1 million in damages, 74% of respondents believed that management was at fault. Among data centers that experienced a loss of $40 million, 50% still blamed management errors.

Any institution that relies on people is exposed to human error. The Uptime Institute survey found, however, that outages stem less from individual worker mistakes than from management errors. Common management pitfalls include a lack of investment in training, inadequate enforcement of policies, outdated procedures, and too little emphasis on certification and on hiring qualified personnel.

It’s important for a facility to take note of these potential management errors before it encounters downtime. Many organizations think of an error as a one-time event, but it’s valuable to turn examples of human error into data points. By noting when mistakes were made and what kind they were, companies can see the bigger picture, which shows where the vulnerabilities and gaps in their operational practices lie.
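As a minimal sketch of what turning mistakes into data points could look like, the snippet below tallies a small incident log by category and by month. The log entries and category labels are hypothetical and chosen only for illustration; they are not drawn from the Uptime Institute survey.

```python
from collections import Counter
from datetime import date

# Hypothetical incident log: (date, category) pairs.
# The category labels are illustrative assumptions.
incidents = [
    (date(2023, 1, 12), "outdated procedure"),
    (date(2023, 2, 3), "insufficient training"),
    (date(2023, 2, 20), "outdated procedure"),
    (date(2023, 4, 7), "policy not enforced"),
    (date(2023, 4, 29), "outdated procedure"),
]


def count_by_category(log):
    """Count how often each kind of mistake appears in the log."""
    return Counter(category for _, category in log)


def count_by_month(log):
    """Count incidents per (year, month) to expose clusters in time."""
    return Counter((day.year, day.month) for day, _ in log)


by_category = count_by_category(incidents)
by_month = count_by_month(incidents)

# List the most frequent kinds of mistake first.
for category, count in by_category.most_common():
    print(f"{category}: {count}")
```

Even a tally this simple makes a recurring category stand out, which is the kind of gap in operational practice that a single incident report tends to hide.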

2. Prevent Cyber Attacks

Cyberattacks make bigger headlines than other causes of downtime. The cyber threats that plague data centers include ransomware infections and distributed denial of service (DDoS) attacks. As more and more companies and organizations go online, cloud services have never been under greater threat.

The connectivity options provided by carrier-neutral colocation facilities make them well suited to confronting a DDoS attack. To withstand a DDoS attack, it pays to have blended ISP connections, as they can provide redundancy without compromising network performance. It also helps to incorporate data analytics that not only monitor data center operations but also identify suspicious patterns in traffic and detect cyberattacks that show up as unusual network activity. By leveraging these options, colocation facilities can detect and manage threats before they wreak havoc on a data center.

Cyber protection is all the more important with the increasing use of Internet of Things (IoT) devices and as more companies buy cloud services. Companies need to reassess their cybersecurity to protect a growing base of customers and virtual users.
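One simple way to flag unusual network activity is to compare recent traffic against a baseline. The sketch below is an assumption-laden illustration, not a production detector: the function name `find_traffic_spikes`, the requests-per-second figures, and the threshold of three standard deviations are all hypothetical.

```python
from statistics import mean, stdev


def find_traffic_spikes(baseline, recent, threshold=3.0):
    """Return indices of recent samples that sit more than `threshold`
    standard deviations above the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: treat anything above it as a spike.
        return [i for i, value in enumerate(recent) if value > mu]
    return [
        i for i, value in enumerate(recent)
        if (value - mu) / sigma > threshold
    ]


# Hypothetical requests-per-second samples.
baseline_rps = [980, 1010, 1005, 995, 1020, 990, 1000, 1015]
recent_rps = [1008, 997, 4200, 1012]

spikes = find_traffic_spikes(baseline_rps, recent_rps)
print(spikes)
```

Real traffic has daily and weekly cycles, so a practical system would likely compare against a baseline for the same time window rather than a single fixed sample.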

3. Understand Equipment’s Health

Data centers run non-stop, which means their operations always carry the risk of equipment failure. Servers are not the only equipment subject to breakdown. Cooling solutions, monitoring devices, electricity generators, and other power sources carry the same risks.

According to the Ponemon Institute, a research center, generator failure causes 10 percent of data center outages. It’s important to maintain and test generators regularly to ensure that the facility has sufficient power in case of a power outage. In the same study, IT equipment failures, such as server and computer problems, account for four percent of data center downtime. A good solution is to monitor the age, structure, and performance of equipment. Management needs to mitigate the risk of old equipment in a server room. Aging equipment will eventually fail, so it should be refreshed as soon as possible.

A data company can assess equipment best when it draws on advanced analytics and automated monitoring systems aided by machine learning. For example, Data Center Infrastructure Management (DCIM) tools can help data hubs assess and examine the status of their equipment along with their colocated asset infrastructure.

Although it’s impossible to predict failures with certainty, some sophisticated algorithms can continually monitor the performance of the equipment. These programs may not determine equipment lifespan, but they can anticipate when the hardware is nearing a breakdown. Having all this information can help a company identify outdated equipment and replace it without taking critical processes offline.
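To illustrate the idea of anticipating a breakdown from monitored performance, here is a rough sketch that fits a straight line to daily temperature readings and estimates how many days remain before a limit is crossed. The readings, the 50-degree limit, and the function names are hypothetical, and a linear trend is a deliberately simple stand-in for the more sophisticated algorithms mentioned above.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_limit(days, readings, limit):
    """Estimate days from the last reading until the fitted trend
    reaches `limit`. Returns None if the trend is flat or falling."""
    slope, intercept = fit_line(days, readings)
    if slope <= 0:
        return None
    crossing_day = (limit - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Hypothetical daily temperature readings for one device.
days = [0, 1, 2, 3, 4]
temps = [40.0, 41.0, 42.0, 43.0, 44.0]

remaining = days_until_limit(days, temps, limit=50.0)
print(remaining)
```

An estimate like this is only a prompt for inspection or scheduled replacement. It says nothing definite about when the hardware will actually fail.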

In short, facilities that have redundancy plans, or backup and emergency solutions, can easily manage equipment failures without compromising data center performance.

4. Be Aware of Software’s Status

Software-related problems are not as common as hardware failures, yet they pose the same threat to efficient data centers. Outdated software opens up an array of security issues. A poorly tested operating system, for instance, can corrupt mission-critical applications. Software bugs are another concern, as they can also damage systems.

Continuous updating and monitoring of vital systems are important so that software applications can function smoothly. Companies should consider automated testing that places their software systems in a variety of simulations. In this way, management can gauge the readiness of the software, assess possible exposure to problems, and explore possible solutions. Facilities should keep software compatibility and performance in mind so that data centers can respond to circumstances that challenge their software capacity.

5. Prepare for Natural Disasters

Natural disasters cannot be controlled, but some of their impacts can be prevented. When companies establish their data centers, most have already considered the location’s geographical and environmental conditions. Even so, some companies neglect disaster plans when no disasters have happened in the recent past.

One preventive measure is to locate the facility in a safe place where disasters rarely occur. All types of environmental threats should be considered: floods, earthquakes, tornadoes, wildfires, etc.

Some companies, however, may still choose to locate their data centers in disaster-prone areas because they are near the company or the market. If so, they should develop a disaster preparedness plan and a disaster recovery plan in which well-trained and certified personnel are designated to handle emergencies. The data hub must always have extra accommodations, spare generators, food, and other necessities. Lastly, all personnel must practice redundancy operations, disaster drills, and safety measures, even in the absence of real danger.

Taking the necessary measures to avoid data center outages should be a priority among data center companies. The good news is that many tools and solutions are now available for these companies to adopt in their business processes. These can help them reinforce their infrastructure so that, even when problems arise, they can keep their systems up and running and continue serving clients efficiently.

AKCP provides monitoring solutions for the data center that assist in minimizing downtime.

- About the Author

For over two decades at AKCP, I have been focused on a single mission: bringing complete visibility, security, and efficiency to the world’s critical infrastructure.

I believe that in the modern data center, AI is only as good as the data it receives. My goal is to ensure facilities have the precise sensor facts needed to control AI opinions, ultimately reducing PUE, releasing stranded capacity, and ensuring maximum uptime.