Data centers serve as a bridge in the digital world, keeping it powered to meet the demand for information. These facilities house high-density servers that store and process large amounts of data. Running around the clock, they consume a great deal of power and generate a great deal of heat, which raises the temperature inside the facility. Large data centers can consume enough energy to power a city of one million people. Cooling systems are installed to remove the excess heat. However, if cooling is inadequate, the data center can overheat.

Overheating in the data center can cause equipment failure, leading to downtime that affects the entire business operation. For colocation data centers, it can also damage the relationship between the owner and its corporate clients. The consequences of overheating can cost millions of dollars or even threaten business continuity. Therefore, it is important to maintain an optimum temperature to prevent overheating in the data center.

Overheating at Microsoft: A Case Study

A row of servers in one of Microsoft’s data centers overheated during a firmware update. As a result, its cloud services were interrupted for 16 hours, and both Outlook and Hotmail were offline during this period. According to a report, previous updates had been completed successfully, but this one failed unexpectedly and the temperature rose rapidly. By the time the problem was mitigated, the heat had already affected the data center. Bringing operations back online required both infrastructure software and human intervention to restore the core infrastructure in the affected physical region of the data center.

Microsoft runs its data centers at higher temperatures to save energy. However, doing so allows inlet temperatures to spike quickly, and in this case the strategy left Microsoft little time to recover from the cooling failure. The risk of overheating is clearly evident, especially in high-density data centers such as Microsoft’s.

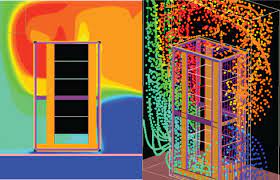

What are Hotspots?

Photo Credit: www.upsite.com

Increasing rack power densities make a data center prone to hotspots. A hotspot is a location in the data center where the intake temperature is higher than in the surrounding areas. Hotspots usually occur at the top of the rack, since heat naturally rises. Although a common practice is to set the cooling system to maximum, hotspots still develop, because the flow of cold air is disrupted on its way to where it is needed. As a result, cooling capacity appears to be insufficient in some areas. If hotspots are not identified early, they can damage equipment, so administrators need to act to eliminate them as early as possible.
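To make "higher than the surrounding areas" concrete, here is a minimal Python sketch that flags any intake reading exceeding the room's median intake temperature by more than a fixed margin. The function name `find_hotspots`, the location labels, the sample readings, and the 5°F margin are all hypothetical choices for illustration, not values from the sources cited in this article.

```python
from statistics import median

# Hypothetical margin: how far above the room median an intake reading
# must be before it is treated as a hotspot. Tune for your own facility.
HOTSPOT_MARGIN_F = 5.0


def find_hotspots(intake_temps_f, margin_f=HOTSPOT_MARGIN_F):
    """Return (location, temp) pairs whose intake temperature exceeds the
    room median by more than margin_f, hottest first.

    intake_temps_f maps a location label (e.g. "rack-01/top") to a
    reading in degrees Fahrenheit.
    """
    if not intake_temps_f:
        return []
    baseline = median(intake_temps_f.values())
    hot = [
        (location, temp)
        for location, temp in intake_temps_f.items()
        if temp - baseline > margin_f
    ]
    # Sort so the worst hotspot is handled first.
    return sorted(hot, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    readings = {
        "rack-01/bottom": 68.0,
        "rack-01/middle": 70.5,
        "rack-01/top": 77.9,  # heat rises, so the top of the rack runs warmer
        "rack-02/bottom": 67.5,
        "rack-02/middle": 69.8,
        "rack-02/top": 71.2,
    }
    for location, temp in find_hotspots(readings):
        print(f"Hotspot at {location}: {temp:.1f} F")
```

Comparing against the room median rather than a fixed limit catches locations that are unusually warm relative to their neighbors, even when the whole room is still within range.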

Ways to Prevent Overheating in the Data Center

High temperature is in the nature of the data center. Heat is inevitable, but overheating can be staved off. By following the practices below, administrators can prevent overheating that could lead to catastrophe.

Manual Temperature Checks

Automatic temperature monitoring is one of the most game-changing innovations in the data center industry. Although it provides fast and reliable temperature data, manual checks are also important. Performing them regularly verifies that the sensors are working properly and reporting the correct temperature. It may not be as easy as relying on sensors, but it helps administrators ensure they are not overlooking any potential threat. Manual checks are also a good fit for smaller data centers without the budget for automatic monitoring.
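One way to use manual checks to validate sensors is to compare each handheld reading with the sensor reading at the same location and flag any sensor that disagrees by more than a tolerance. This is a minimal sketch; the function `sensors_needing_attention`, the location names, and the 1°F tolerance are hypothetical.

```python
# Hypothetical tolerance: how far a sensor may drift from a trusted
# handheld reading before it is flagged for recalibration or replacement.
TOLERANCE_F = 1.0


def sensors_needing_attention(sensor_readings_f, manual_readings_f, tolerance_f=TOLERANCE_F):
    """Compare sensor readings against manual spot checks taken at the
    same locations. Return {location: deviation_f} for every sensor that
    disagrees by more than tolerance_f degrees Fahrenheit.

    A location that was checked manually but has no sensor reading is
    reported with a deviation of None, since a silent sensor is itself
    a problem worth investigating.
    """
    flagged = {}
    for location, manual_temp in manual_readings_f.items():
        sensor_temp = sensor_readings_f.get(location)
        if sensor_temp is None:
            flagged[location] = None
            continue
        deviation = sensor_temp - manual_temp
        if abs(deviation) > tolerance_f:
            flagged[location] = round(deviation, 2)
    return flagged


if __name__ == "__main__":
    sensors = {"row-A/rack-03": 72.4, "row-A/rack-04": 75.9}
    manual = {"row-A/rack-03": 72.1, "row-A/rack-04": 73.0, "row-B/rack-01": 70.2}
    for location, deviation in sensors_needing_attention(sensors, manual).items():
        if deviation is None:
            print(f"{location}: no sensor reading")
        else:
            print(f"{location}: sensor off by {deviation:+.2f} F")
```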

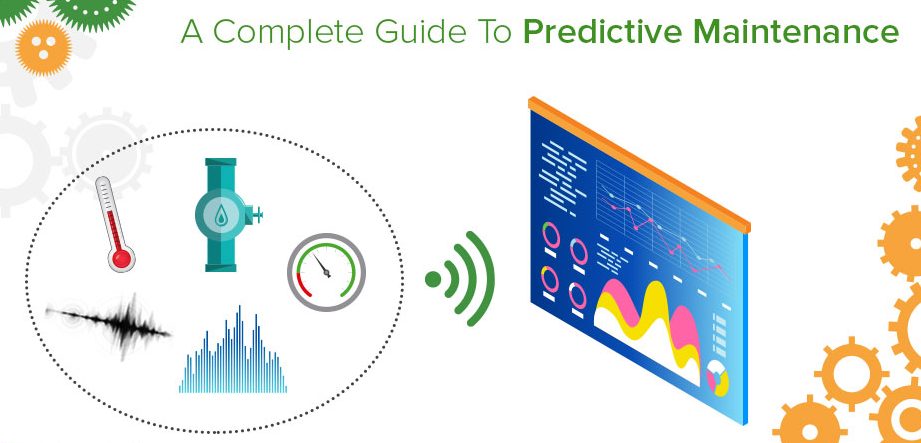

Regular Preventive Maintenance

Photo Credit: limblecmms.com

In data centers, the HVAC system is often given less priority than the servers. However, keeping the servers in the best condition is not enough to run a reliable data center. The facility’s support components, including the HVAC systems, are also critical, because server performance depends on the performance of the HVAC system. In fact, one of the most common causes of overheating in the data center is a poorly maintained air conditioner.

When maintaining the HVAC system, administrators should focus on key components such as:

- Air filters

- Blower drive systems

- Compressors

- Facility fluid and piping

- Evaporator coils

Completing this checklist regularly will optimize the performance and prolong the lifespan of the units. If the company cannot perform this work in-house, working with a service provider is recommended.
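Tracking the checklist above can be as simple as recording when each component was last serviced and listing whatever is due. The sketch below does that; the service intervals are hypothetical placeholders, since real intervals should come from the equipment manufacturer or a maintenance provider, and `overdue_items` is an illustrative name.

```python
from datetime import date, timedelta

# Hypothetical service intervals in days, one per checklist item.
SERVICE_INTERVAL_DAYS = {
    "Air filters": 90,
    "Blower drive systems": 180,
    "Compressors": 365,
    "Facility fluid and piping": 365,
    "Evaporator coils": 180,
}


def overdue_items(last_serviced, today, intervals=SERVICE_INTERVAL_DAYS):
    """Return (item, days_overdue) pairs for checklist items whose next
    service date is on or before today.

    last_serviced maps an item name to the date it was last serviced.
    Items with no recorded service are reported with None and listed
    first; the rest are sorted from most to least overdue.
    """
    overdue = []
    for item, interval in intervals.items():
        serviced = last_serviced.get(item)
        if serviced is None:
            overdue.append((item, None))
            continue
        due = serviced + timedelta(days=interval)
        if due <= today:
            overdue.append((item, (today - due).days))
    return sorted(overdue, key=lambda x: (x[1] is not None, -(x[1] or 0)))


if __name__ == "__main__":
    history = {
        "Air filters": date(2024, 1, 1),
        "Blower drive systems": date(2024, 3, 1),
        "Compressors": date(2023, 6, 1),
        "Facility fluid and piping": date(2023, 1, 1),
    }
    for item, days in overdue_items(history, today=date(2024, 6, 1)):
        status = "never serviced" if days is None else f"{days} days overdue"
        print(f"{item}: {status}")
```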

Relocating Problem Loads

It is important to identify a problem load before it results in overheating in the data center, so that it can be moved into a lower-density rack. Of the practices evaluated by Schneider Electric’s Data Center Science Center, this one was concluded to be the most effective. Spreading the excess load to match the available cooling capacity should not leave open space in the rack.
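The idea of moving excess load to racks with spare cooling capacity can be sketched as a simple greedy rebalance. This is an illustrative simplification, not Schneider Electric's method: it treats load as divisible kilowatts (in practice you move whole servers), assumes a single hypothetical per-rack cooling capacity, and uses an illustrative function name, `spread_load`.

```python
def spread_load(rack_loads_kw, cooling_capacity_kw):
    """Greedy sketch: move load from racks above the per-rack cooling
    capacity into racks that still have headroom.

    rack_loads_kw maps rack name -> current IT load in kW.
    Returns (new_loads, moves), where moves is a list of
    (from_rack, to_rack, kw) tuples. Raises ValueError if the room as a
    whole does not have enough cooling capacity for the total load.
    """
    total = sum(rack_loads_kw.values())
    if total > cooling_capacity_kw * len(rack_loads_kw):
        raise ValueError("total load exceeds total cooling capacity")

    loads = dict(rack_loads_kw)
    moves = []
    # Handle the most heavily loaded racks first.
    for src in sorted(loads, key=loads.get, reverse=True):
        excess = loads[src] - cooling_capacity_kw
        # Fill the least loaded racks (most headroom) first.
        for dst in sorted(loads, key=loads.get):
            if excess <= 0:
                break
            headroom = cooling_capacity_kw - loads[dst]
            if dst == src or headroom <= 0:
                continue
            moved = min(excess, headroom)
            loads[src] -= moved
            loads[dst] += moved
            excess -= moved
            moves.append((src, dst, moved))
    return loads, moves


if __name__ == "__main__":
    new_loads, moves = spread_load({"rack-A": 12, "rack-B": 4, "rack-C": 5}, cooling_capacity_kw=8)
    for src, dst, kw in moves:
        print(f"Move {kw} kW from {src} to {dst}")
    print(new_loads)
```

Because the function first checks that total load fits within total capacity, the greedy pass always finds enough headroom to bring every rack within its cooling limit.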

Effective Cooling System

A cooling system has always been a top priority for data center administrators. According to a 2015 survey, as much as 24% of a data center’s budget can go to cooling. The number of servers exhausting heat simultaneously within the facility makes cooling even more critical. Simply having a cooling system and setting it to maximum is not enough to prevent overheating in the data center. To keep the facility operating at peak efficiency, an effective cooling system is indispensable.

As air flows through the room, it is important to ensure that hot and cold air do not mix. According to Mission Critical, this practice “is one of the most promising energy-efficiency measures available to new and legacy data centers today” (Mission Critical, Fall 2007). The cooling system needs to deliver cold air to strategic locations so that servers do not take in high-temperature air. Keeping server inlet temperatures within 64.4 to 80.6°F (18 to 27°C), the range recommended by ASHRAE, supports both performance and cost-efficiency.
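The ASHRAE range above can be turned into a simple check on each inlet reading. This minimal sketch assumes readings in Fahrenheit; the helper names `f_to_c` and `classify_inlet` are hypothetical, and only the 64.4 to 80.6°F limits come from the text. Note that this is a recommended range, so a reading outside it is a warning sign rather than proof of failure.

```python
# ASHRAE recommended inlet range quoted in the text: 64.4-80.6 F (18-27 C).
ASHRAE_MIN_F = 64.4
ASHRAE_MAX_F = 80.6


def f_to_c(temp_f):
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


def classify_inlet(temp_f, low_f=ASHRAE_MIN_F, high_f=ASHRAE_MAX_F):
    """Return 'below', 'within', or 'above' the recommended inlet range."""
    if temp_f < low_f:
        return "below"
    if temp_f > high_f:
        return "above"
    return "within"


if __name__ == "__main__":
    for reading in (62.0, 72.5, 84.0):
        print(f"{reading:.1f} F ({f_to_c(reading):.1f} C): {classify_inlet(reading)}")
```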

Hot and Cold Air Containment

Hot and cold air containment is used to optimize the cooling system. In 2016, 80% of data centers had implemented containment as part of their cooling strategy. Both approaches prevent hot and cold air from mixing, mitigating the risk of overheating in the data center. However, every data center is different, so it’s best to understand how the two approaches differ. That way, administrators will know which one will achieve the most effective cooling in their facility.

Hot Air Containment

A hot-aisle containment system (HACS) uses a physical barrier to enclose the path of the hot air. It collects the hot air exhausted by the equipment and guides it to the air-conditioning unit return. Because it isolates the hot air, it makes more cooling capacity available to the data center. Research by Schneider Electric’s Data Center Science Center shows that this approach can deliver 43% cooling system energy savings over cold-aisle containment.

Cold Air Containment

A cold-aisle containment system (CACS) isolates the cold aisle. Doors at the ends of the aisle and partitions at the ceiling act as the barrier that separates hot and cold air. To implement this, the racks must be arranged in the hot-aisle/cold-aisle layout introduced by IBM. This approach is easier and more practical to implement, but less effective.

Monitoring Using Temperature Sensors

Rising temperature, if not detected, can bring the data center to a crashing halt. To prevent overheating, it is essential to track the temperature at regular intervals and identify its pattern. A spiking temperature is a sign of an underlying issue, such as a cooling failure, lost power, or air recirculation. Monitoring with temperature sensors helps discover these issues earlier, leaving more time to take action.
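Watching the rate of change, not just the absolute value, is one way to spot a spike early, before the temperature reaches a hard limit. This minimal sketch computes the rise between consecutive samples; the function `find_spikes` and the 2°F-per-minute threshold are hypothetical and should be tuned to the room.

```python
# Hypothetical alarm threshold: a rise of more than 2 F per minute is
# treated as a spike worth investigating.
MAX_RISE_F_PER_MIN = 2.0


def find_spikes(samples, max_rise=MAX_RISE_F_PER_MIN):
    """samples is a list of (minutes_since_start, temp_f) tuples in time
    order. Return the timestamps at which the rate of rise since the
    previous sample exceeds max_rise degrees F per minute.
    """
    spikes = []
    for (t0, temp0), (t1, temp1) in zip(samples, samples[1:]):
        elapsed = t1 - t0
        if elapsed <= 0:
            raise ValueError("samples must be in strictly increasing time order")
        rate = (temp1 - temp0) / elapsed
        if rate > max_rise:
            spikes.append(t1)
    return spikes


if __name__ == "__main__":
    # Five-minute sampling interval; a sudden jump appears at minute 15.
    log = [(0, 72.0), (5, 72.5), (10, 73.0), (15, 85.0), (20, 90.0)]
    print("Spikes detected at minutes:", find_spikes(log))
```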

Properly installed temperature sensors provide accurate data for tracking temperature changes. If the temperature rises significantly, the monitoring system sends a notification to the administrators. Multiple sensors are placed on walls and ceilings, and at the top, middle, and bottom of racks, to measure both ambient and rack temperature. Sensors are also placed near equipment intakes, as these locations are susceptible to hotspots.
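A notification rule needs some care so that a reading hovering near the limit does not send a flood of alerts. A common technique is hysteresis: alert when the reading crosses an upper threshold, and clear only after it falls below a lower one. The sketch below assumes one alarm object per sensor; the class `TemperatureAlarm` is hypothetical, the 80.6°F default reuses the ASHRAE upper limit quoted earlier, and the 78°F clear level is an arbitrary illustration.

```python
class TemperatureAlarm:
    """Raise an alert when a reading rises above high_f and clear it only
    after the temperature falls back below clear_f. The gap between the
    two thresholds (hysteresis) prevents repeated notifications from a
    reading that hovers near the limit.
    """

    def __init__(self, high_f=80.6, clear_f=78.0, notify=print):
        if clear_f >= high_f:
            raise ValueError("clear_f must be below high_f")
        self.high_f = high_f
        self.clear_f = clear_f
        self.notify = notify  # any callable that accepts a message string
        self.active = False

    def update(self, location, temp_f):
        """Feed one reading; return True while the alarm is active."""
        if not self.active and temp_f > self.high_f:
            self.active = True
            self.notify(f"ALERT {location}: {temp_f:.1f} F exceeds {self.high_f:.1f} F")
        elif self.active and temp_f < self.clear_f:
            self.active = False
            self.notify(f"CLEARED {location}: {temp_f:.1f} F")
        return self.active


if __name__ == "__main__":
    alarm = TemperatureAlarm()
    for reading in (79.0, 81.0, 80.0, 82.0, 79.0, 77.0):
        alarm.update("rack-01/top", reading)
```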

AKCP Wireless Temperature Sensor

With the amount of money at stake in running a data center, investing in sensors is wise. Wireless temperature sensors from AKCP can help you run your data center with confidence against the risk of overheating. Their wireless capability also gives administrators advantages such as data accuracy, no cabling, accessibility, and cost-efficiency.

The sensor has a built-in calibration check that sends a warning if the sensor requires calibration. Proper calibration yields accurate measurements, which in turn make good process control possible. With AKCP wireless temperature sensors, the data center can therefore run with utmost efficiency and reliability.

Sensor Features

- 4x AA battery powered, with 10-year life*

- USB 5VDC external power

- 12VDC external power

- Custom sensor cable length up to 15 ft to position the sensor in an optimal location

- NIST2 dual-sensor calibration integrity check

- NIST3 triple-sensor calibration integrity check with failover
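The feature list mentions dual- and triple-sensor calibration integrity checks. AKCP's internal logic is not described here, so the following is only a generic sketch of how redundant readings can be cross-checked: two elements can reveal that something has drifted, while three allow a median vote that outvotes a single faulty element. The function `check_redundant` and the 0.5°F tolerance are hypothetical and are not AKCP's implementation.

```python
from statistics import median

# Hypothetical agreement tolerance between redundant sensing elements.
AGREEMENT_TOLERANCE_F = 0.5


def check_redundant(readings_f, tolerance_f=AGREEMENT_TOLERANCE_F):
    """Cross-check two or three elements measuring the same spot.
    Returns (value_to_report, suspect_indices).

    - Two elements: if they disagree by more than tolerance_f, both are
      suspect, because there is no way to tell which one drifted; the
      average is still reported.
    - Three elements: the median is reported, and any element farther
      than tolerance_f from it is marked suspect, so one drifting
      element is outvoted (a simple form of failover).
    """
    if len(readings_f) == 2:
        a, b = readings_f
        suspects = [0, 1] if abs(a - b) > tolerance_f else []
        return (a + b) / 2, suspects
    if len(readings_f) == 3:
        mid = median(readings_f)
        suspects = [i for i, r in enumerate(readings_f) if abs(r - mid) > tolerance_f]
        return mid, suspects
    raise ValueError("expected two or three readings")


if __name__ == "__main__":
    print(check_redundant([70.0, 70.2]))        # elements agree
    print(check_redundant([70.0, 70.1, 73.0]))  # third element has drifted
```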

Reference Links:

https://www.datacenterknowledge.com/archives/2013/03/14/heat-spike-in-data-center-caused-hotmail-outage

https://www.datacenterdynamics.com/en/news/overheating-brings-down-microsoft-data-center/

https://download.schneider-electric.com/files?p_Doc_Ref=SPD_VAVR-9GNNGR_EN

http://www.facilitiesnet.com/whitepapers/pdfs/APC_011112.pdf

https://www.ambientedge.com/kingman-heating-and-air-conditioning-repair-and-service-experts/what-do-i-do-if-my-data-center-is-overheating/