The technology sector is full of jargon. But are terms like human augmentation, mesh networks, blockchain, and intelligent assistants actually understood by the average person outside the tech industry? It makes sense that entire dictionaries are devoted to technology. This article, which highlights over 20 technologies that are shaping digital business, seeks to define the terminology that data center experts are prone to throw around.

Given that the internet of things (IoT) is a key driver of the digital transformation of many enterprises, now would be a good moment to examine the concept of digital twins, which is frequently used in conjunction with IoT.

What Are Digital Twins?

The definition of a digital twin is straightforward: it is a digital representation, or “twin,” of an actual object, typically stored on a cloud platform. However, it is more than a 3D model, scanned image, or blueprint of the object. A digital twin is a virtual depiction of a real object that includes all the digitized components and dynamics of how it functions and operates, as well as how it changes over time. Digital twins bridge the gap between the physical and digital worlds, allowing physical objects to be monitored and tested remotely. According to Deloitte, digital twins are expediting the creation of products and processes, improving performance, and enabling predictive maintenance.

Deloitte projects that the global market for digital twins will grow by 38% annually to reach $16 billion by 2023. Additionally, the use of digital twin-enabling technologies like IoT and machine intelligence is anticipated to nearly double by 2020.

Types Of Digital Twins

Photo Credit: www.opal-rt.com/digital-twins/

Although there are many different ways to classify digital twins, including by use case, hierarchy, or utility, the framework provided below offers a decent generic classification that is suitable for the majority of applications.

- Product Digital Twin

Realistic digital models can help product designers rapidly prototype new concepts, work with user communities, and test various what-if scenarios to confirm how the product would function in the real world.

- Operational Digital Twin

Organizations can use a virtual model of a process to test how well a process or process change will function before implementing it and, after implementation, to spot inefficiencies and find solutions. Supply chains, healthcare, smart cities, and other areas are examples of applications outside of manufacturing facilities.

- Performance Digital Twin

When associated with a person, place, or object, digital twins can collect real-time data on usage, performance, operational conditions, and more. Organizations can forecast when maintenance is necessary, increase efficiency, enhance digital twin models, solve issues more quickly, and make more educated decisions by combining this data with AI and ML algorithms.

- Remote Digital Twin

Because digital twins by definition do not require proximity, it is debatable whether this belongs in a separate category. We include it anyway, with human involvement serving as the key differentiator. Examples include a surgeon operating remotely or an engineer using a digital twin model to make repairs.
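To make the performance digital twin more concrete, here is a minimal sketch of forecasting when maintenance might be due. It stands in a plain least-squares trend line for the AI and ML algorithms mentioned above, and the function name, the vibration readings, and the threshold are all hypothetical values chosen for illustration.

```python
from typing import Optional, Sequence


def hours_until_threshold(hours: Sequence[float],
                          values: Sequence[float],
                          threshold: float) -> Optional[float]:
    """Fit a straight line to past readings and estimate how many hours
    remain after the last reading until the line reaches the threshold.
    Returns None when the readings are not trending upward."""
    n = len(hours)
    if n < 2 or n != len(values):
        raise ValueError("need at least two paired readings")
    mean_x = sum(hours) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    if sxx == 0:
        raise ValueError("readings must span more than one point in time")
    slope = sum((x - mean_x) * (y - mean_y)
                for x, y in zip(hours, values)) / sxx
    intercept = mean_y - slope * mean_x
    if slope <= 0:
        return None  # no upward trend, so no crossing is predicted
    crossing_hour = (threshold - intercept) / slope
    return max(0.0, crossing_hour - hours[-1])


# Hypothetical vibration readings (mm/s), one per hour
remaining = hours_until_threshold([0, 1, 2, 3], [1.0, 1.5, 2.0, 2.5], 4.0)
print(f"Estimated hours until threshold: {remaining}")
```

A production twin would likely use richer models and many more signals. The point here is only that live usage data plus a model yields a forecast that can trigger maintenance before a failure.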

How Data Center Digital Twins Work

A physical or mathematical model of an asset is often the starting point of a digital twin. After the initial digital twin is created, it uses information from sensors placed on the asset to monitor operating conditions, real-time performance, and changes over time. This information is transmitted to a cloud-based system, where it is analyzed. AI and ML algorithms let the digital twin learn and keep track of changes over time, effectively turning it into a live digital representation of the asset. It may also draw on a range of other sources, including data from the larger ecosystem, industry and domain expertise, similar assets, and human experts. A concrete example will help to clarify:

A patient’s heart is scanned and imaged, and data from the sensors in her pacemaker is used to generate a 3D digital twin of her heart. The digital twin’s AI/ML algorithms customize the model to reflect the traits of her heart and body. Because her surgery is complex, the digital twin allows her doctor to prepare by planning and performing a mock procedure. The increased precision also lets him employ a less invasive treatment. As the surgeon performs the procedure from 3,000 miles away, the digital twin gives him real-time 3D insight.
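Returning to the general mechanism, the loop of "start from a model, then keep refining it with sensor data" can be sketched in a few lines. This is an illustrative toy, not how any particular product works: the `DigitalTwin` class, its fields, and the smoothing weight are hypothetical, and simple exponential smoothing stands in for the AI and ML algorithms described above.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DigitalTwin:
    """Hypothetical twin mirroring one numeric property of an asset."""
    asset_id: str
    smoothing: float = 0.3             # assumed weight for each new reading
    estimate: Optional[float] = None   # current smoothed state of the asset
    history: List[float] = field(default_factory=list)

    def ingest(self, reading: float) -> float:
        """Fold a new sensor reading into the twin's current state."""
        self.history.append(reading)
        if self.estimate is None:
            self.estimate = reading    # the first reading seeds the model
        else:
            self.estimate = (self.smoothing * reading
                             + (1 - self.smoothing) * self.estimate)
        return self.estimate

    def drift(self) -> float:
        """Change between the first reading and the current estimate."""
        if not self.history:
            return 0.0
        return self.estimate - self.history[0]


twin = DigitalTwin(asset_id="rack-01")
for temperature_c in (20.0, 30.0):     # hypothetical sensor readings
    twin.ingest(temperature_c)
print(twin.estimate, twin.drift())
```

The twin keeps both the raw history and a smoothed estimate, so it can report the asset's current state as well as how far it has moved from its starting point.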

Digital Twins And Interconnection

Photo Credit: hellofuture.orange.com

Whatever their complexity, from a single product part to a whole city, all digital twins have one thing in common: they change over time as the physical asset they are linked to changes. To track those changes effectively, the asset must be connected to the people, clouds, applications, AI and ML systems, and analytics that the digital twin relies on. Direct and secure connections at the digital edge, where digital twin data is being created and consumed, will deliver deeper insights more reliably, effectively, and affordably. Here are some reasons why connectivity is crucial:

- Where does the information originate? Usually, it will originate from several sources and locations. You won’t be gathering it from one asset over one network in one place. To get data from many sources, you’ll need access to an ecosystem of various networks.

- How is the information processed? In some circumstances, a significant volume of data from sensors and other data sources will need to be transferred to the cloud for analysis by AI and ML algorithms. All of the counterparties participating in the value chain surrounding your data must be able to connect to one another. Processing and storing the data typically requires access to several clouds, which in turn calls for direct and secure hybrid multi-cloud connectivity.

- When must the information be gathered? Is there a deadline? For long-cycle analytics, some data may be collected less often, while other data may require real-time processing or depend on an action (for example, someone presses a button, so something should happen). Real-time data collection needs fast, low-latency interconnection to guarantee that data is transmitted promptly and reliably before its usefulness expires.

- Who uses the data, and do any other systems rely on it? This necessitates communication between the twin repository and the data users who must act on the data.

The answers to these questions come down to basic physics: data takes time to travel, so distance adds latency. With so many factors in play, digital twins require a dynamic, global interconnection platform that facilitates real-time interactions between people, things, locations, clouds, and data. This entails situating IT infrastructure at the digital edge, close to where digital twin data is generated, stored, and analyzed.
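The physics in question is largely propagation delay. The rough estimate below uses the 3,000-mile distance from the remote surgery example and assumes light in optical fiber travels at about two-thirds of its vacuum speed, which is a common rule of thumb rather than a figure from this article. It ignores routing, switching, and processing time, so real latency would be higher.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in vacuum
FIBER_FACTOR = 2 / 3                # assumed slowdown inside optical fiber
KM_PER_MILE = 1.609344


def one_way_delay_ms(distance_km: float) -> float:
    """Best-case one-way propagation delay over fiber, in milliseconds."""
    return distance_km / (SPEED_OF_LIGHT_KM_S * FIBER_FACTOR) * 1000


def round_trip_ms(distance_km: float) -> float:
    """Best-case round trip: the signal goes out and the reply comes back."""
    return 2 * one_way_delay_ms(distance_km)


far_km = 3000 * KM_PER_MILE         # the surgeon 3,000 miles away
near_km = 10                        # hypothetical edge site close to the data
print(f"3,000 miles: {round_trip_ms(far_km):.1f} ms round trip")
print(f"10 km:       {round_trip_ms(near_km):.2f} ms round trip")
```

Under these assumptions the long-haul round trip costs tens of milliseconds before any processing happens, while the nearby site costs a small fraction of a millisecond. That gap is the case for placing infrastructure close to where the data is produced and used.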

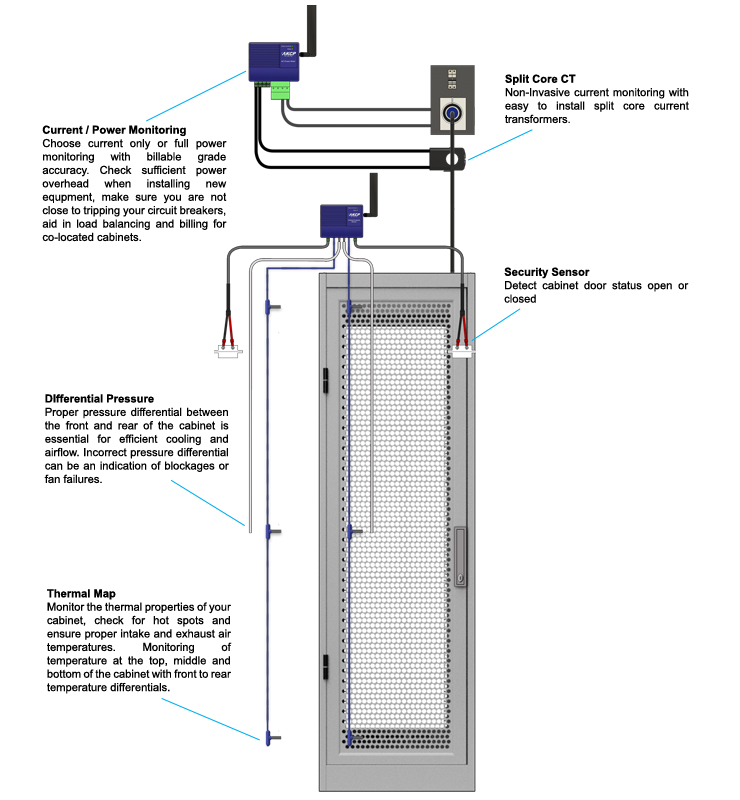

Monitoring With AKCP

AKCP Thermal mapping

The Cabinet Analysis Sensor (CAS) features a cabinet thermal map for detecting hot spots and a differential pressure sensor for analysis of airflow. Monitor up to 16 cabinets from a single IP address with the sensorProbeX+ base units. The Wireless Cabinet Analysis Sensor is also available using our Wireless Tunnel™ Technology.

Differential Temperature (△T)

Cabinet thermal maps consist of 2 strings of 3x Temp and 1x Hum sensor. Monitor the temperature at the front and rear of the cabinet, at the top, middle, and bottom. The △T value, the front-to-rear temperature differential, is calculated and displayed with animated arrows in AKCPro Server cabinet rack map views.
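As a rough illustration of the arithmetic, the sketch below computes a front-to-rear differential at each of the three heights. It assumes △T is taken as rear temperature minus front temperature. The function names and readings are hypothetical, and this is not a description of how AKCPro Server performs the calculation internally.

```python
from typing import Dict

LEVELS = ("top", "middle", "bottom")


def delta_t(front_c: Dict[str, float], rear_c: Dict[str, float]) -> Dict[str, float]:
    """Rear minus front temperature at each cabinet height, in deg C."""
    return {level: rear_c[level] - front_c[level] for level in LEVELS}


def mean_delta_t(front_c: Dict[str, float], rear_c: Dict[str, float]) -> float:
    """Average differential across the three heights."""
    per_level = delta_t(front_c, rear_c)
    return sum(per_level.values()) / len(per_level)


# Hypothetical readings from one cabinet
front = {"top": 24.0, "middle": 22.0, "bottom": 20.0}
rear = {"top": 36.0, "middle": 33.0, "bottom": 30.0}
print(delta_t(front, rear))
print(mean_delta_t(front, rear))
```

Looking at the differential per height, and not only the average, is what makes a hot spot at the top of a cabinet visible.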

Differential Pressure (△P)

There should always be positive pressure at the front of the cabinet to ensure that air from the hot and cold aisles is not mixing. Because air travels from areas of high pressure to areas of low pressure, efficient cooling depends on higher pressure at the front of the cabinet and lower pressure at the rear.
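The pressure rule above reduces to a simple comparison, sketched here. The function name, the pascal units, and the default margin of zero are assumptions made for illustration, and a real deployment would choose its own alert margin.

```python
def airflow_ok(front_pa: float, rear_pa: float, min_margin_pa: float = 0.0) -> bool:
    """True when the cabinet front is at higher pressure than the rear
    by more than the given margin, so air flows front to rear."""
    return (front_pa - rear_pa) > min_margin_pa


# Hypothetical differential pressure readings, in pascals
print(airflow_ok(front_pa=12.0, rear_pa=5.0))   # front higher than rear
print(airflow_ok(front_pa=5.0, rear_pa=12.0))   # reversed, air may recirculate
```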

Rack Maps and Containment Views

With an L-DCIM or PC with AKCPro Server installed, dedicated rack maps displaying Cabinet Analysis Sensor data can be configured to give a visual representation of each rack in your data center. If you are running a hot/cold aisle containment, then containment views can also be configured to give a sectional view of your racks and containment aisles.

AKCP Rack+

AKCP Wireless Smart Rack

Build up your data center with a wired or wireless Rack+ system to monitor environmental and power conditions on an individual cabinet level. With AKCP’s system architecture and variety of sensors, this can scale up to a complete Smart Data Center monitoring system that integrates mainline power monitoring, CRAC systems, and backup power, all centrally monitored with AKCPro Server.

Make adjustments to your data center environment, and instantly see the effect it has on PUE numbers. Run your data center at its optimum condition for cost savings and server health.
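For readers unfamiliar with the metric, PUE (power usage effectiveness) is total facility power divided by the power drawn by IT equipment, so a value closer to 1.0 means less overhead. The sketch below shows the calculation before and after a hypothetical cooling adjustment. The function name and the kilowatt figures are illustrative assumptions, not measurements.

```python
def pue(total_facility_kw: float, it_load_kw: float) -> float:
    """Power usage effectiveness: total facility power over IT power."""
    if it_load_kw <= 0:
        raise ValueError("IT load must be positive")
    return total_facility_kw / it_load_kw


# Hypothetical readings before and after raising the cooling setpoint
before = pue(total_facility_kw=1500.0, it_load_kw=1000.0)
after = pue(total_facility_kw=1400.0, it_load_kw=1000.0)
print(f"PUE before: {before:.2f}, after: {after:.2f}")
```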

Benefits:

- Live PUE numbers

- Control and monitor CRAC units

- Thermal mapping of cabinets

- Integrated solution

- Control and monitor access to cabinets

- Control and monitor access to rooms

- Monitoring of complete power train

- Free DCIM software

Intelligent Sensors

sensorProbeX+ is compatible with all AKCP Intelligent sensors, making it an extremely versatile and cost-effective monitoring solution. From the data center to remote sites and industrial controls, we have you covered.

Environmental Monitoring Sensors

Wherever monitoring of environmental conditions is important to your daily operations and health of equipment, deploy sensors to monitor temperature, humidity, airflow, and water leaks.

Security Sensors

RFID access control swing handle cabinet locks, door contacts, and motion detectors will keep you in tune with the security situation at your sites down to cabinet level. Control who has access, and when, with RFID access control, centrally managed and monitored through AKCPro Server.

Power Sensors

AC voltage detection, DC voltmeters, sensor-controlled relays, and power metering are all available on the sensorProbeX+ (SPX+).

Specialized Sensors

Tank depth pressure sensors can monitor liquid levels in fuel tanks, oil storage, chemical tanks, and so on. Any tank up to 2 meters in depth can be monitored. Alarms are generated when levels drop to a critical point, giving you sufficient notice to refill. Other specialized sensors include the key switch override, which disables notifications while maintenance work is being performed, and a programmable LCD.
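A pressure-based depth reading relies on the hydrostatic relationship between pressure and liquid height: depth equals gauge pressure divided by the product of liquid density and gravity. The sketch below applies that formula and a low-level alarm check. It assumes the sensor reports gauge pressure in pascals at the bottom of the tank. The function names, the density, and the alarm threshold are hypothetical, and the way the AKCP sensor reports its readings is not described here.

```python
GRAVITY_M_S2 = 9.80665  # standard gravity


def liquid_depth_m(gauge_pressure_pa: float, density_kg_m3: float) -> float:
    """Liquid depth above the sensor from hydrostatic pressure: h = P / (rho * g)."""
    if density_kg_m3 <= 0:
        raise ValueError("density must be positive")
    return gauge_pressure_pa / (density_kg_m3 * GRAVITY_M_S2)


def needs_refill(depth_m: float, critical_depth_m: float) -> bool:
    """True when the level has dropped to or below the critical depth."""
    return depth_m <= critical_depth_m


# Hypothetical reading from a water tank (density about 1000 kg/m^3)
depth = liquid_depth_m(gauge_pressure_pa=2500.0, density_kg_m3=1000.0)
print(f"Depth: {depth:.2f} m, refill needed: {needs_refill(depth, 0.30)}")
```

Because the result depends on density, the same pressure reading corresponds to a different depth for fuel or oil than it does for water.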

Reference Links:

https://blog.equinix.com/blog/2019/05/02/how-to-speak-like-a-data-center-geek-digital-twins/

https://www.forbes.com/sites/tomcoughlin/2022/04/28/data-center-sustainability-using-digital-twins-and-seagate-data-center-sustainability/?sh=601cd6397f91

https://www.datacenterdynamics.com/en/opinions/does-your-data-center-need-digital-twin/