Innovative Data Center Cooling For Increasing Demands

Social networking, internet video streaming, smartphone apps, and other technological advances have driven a surge in demand for data. Since 2010, data center workloads have increased sixfold while energy usage has remained roughly stable thanks to improved energy efficiency. Current efficiency methods, however, are unlikely to keep pace with future demand from data-hungry technologies such as AI, 5G, blockchain, and self-driving cars. On top of this growing requirement, the COVID-19 pandemic further increased the demand for data center services. The unexpected crisis highlighted the importance of data in every part of our lives. Virtual meetings, working from home, and VoIP services all require cloud computing and network capacity. As pressure on IT infrastructure grows, so does the demand for additional data centers, resulting in massive energy usage. In this article, we look at innovative data center cooling for increasing demands.

Cooling and other vital infrastructure maintenance are sometimes put on the back burner because other issues appear to be more pressing. When large companies compete for limited server space in a data center, pressing needs often take priority over routine maintenance and upgrades.

However, it is impossible to overstate the importance of keeping your data center equipment cool. Overheating servers are inefficient at best and, at worst, a danger to your facilities and employees.

Let’s take a look at the present state of data center cooling and the most efficient and cost-effective options you can implement.

What Is Data Center Cooling And Its Importance?

The equipment, tools, techniques, and processes that assure an appropriate operating temperature within a data center facility are referred to as data center cooling. Proper data center cooling guarantees that the data center receives the required cooling and ventilation to keep all devices and equipment operating within the desired temperature range.

It should go without saying that poorly managed data center cooling can result in excessive heat, putting servers, storage devices, and networking hardware under a lot of strain. This can result in downtime, damage to vital components, and a shorter equipment lifespan, all of which raise capital expenditures. That is not all. From an operational standpoint, inefficient cooling systems can drastically raise power costs.

Growing Demand For Cooling Innovation in Data Centers

The demand for data has prompted tech giants such as Facebook, Microsoft, and Google to construct hyperscale data centers with more than 5,000 servers and 10,000 square feet of floor space. The use of high-density computing (HDC) has also increased, and when this trend is combined with cooling requirements, energy consumption will climb even more. According to a report by the Aspen Global Change Institute, data centers consume massive amounts of electricity, which is then transformed into heat, necessitating a cooling mechanism. The cooling process itself accounts for a substantial share of that electricity, followed by storage drives and network servers.

This is why, now more than ever, data centers require new cooling systems. If you feel your cooling system isn’t working as well as it should, it could be due to one of the following factors.

Higher Energy Consumption

Every year, new technological advancements enable computers to perform increasingly complicated functions faster than ever before. Artificial intelligence (AI), the internet of things (IoT), cloud computing, and other server-hosted services are all expanding at a rapid pace.

More powerful, faster servers are being used in data centers to meet these changing demands. As a result, even the most conservative estimates put data center power consumption in 2018 above 1% of worldwide power consumption. Projections of data center power use have more than doubled, from 200 terawatt-hours (TWh) in 2018 to 500 TWh in 2020, indicating that the percentage has likely risen significantly since then.

Growing rack power density helps explain some of this electricity demand. Although the average rack power density is about 7 kW, many data centers run racks at 16 kW. With the rise of high-performance computing, even 100 kW per rack isn’t out of the question.
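To put these densities in perspective, the sketch below estimates how much air must pass through a rack to carry away its heat, using the standard sensible-heat relation (power = air density × specific heat × airflow × temperature rise). The air properties are approximate room-condition values, and the 12 K front-to-rear temperature rise is an illustrative assumption, not a figure from this article.

```python
# Approximate properties of air at typical room conditions (assumed values).
AIR_DENSITY_KG_M3 = 1.2
AIR_SPECIFIC_HEAT_J_KG_K = 1005.0
M3S_TO_CFM = 2118.88  # 1 cubic metre per second in cubic feet per minute


def required_airflow_m3s(rack_power_kw: float, delta_t_k: float = 12.0) -> float:
    """Airflow needed to remove rack_power_kw of heat at a given air temperature rise.

    delta_t_k is the assumed front-to-rear temperature rise across the rack.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    watts = rack_power_kw * 1000.0
    return watts / (AIR_DENSITY_KG_M3 * AIR_SPECIFIC_HEAT_J_KG_K * delta_t_k)


if __name__ == "__main__":
    # The three rack densities mentioned in the text.
    for kw in (7, 16, 100):
        flow = required_airflow_m3s(kw)
        print(f"{kw:>3} kW rack: {flow:.2f} m3/s ({flow * M3S_TO_CFM:.0f} CFM)")
```

Under these assumptions airflow scales linearly with rack power, so a 100 kW rack needs roughly fourteen times the air of a 7 kW rack, which is one reason very dense racks push operators toward liquid cooling.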

The need for electricity is unlikely to decrease anytime soon as large corporations offer more cloud-based services and IoT networks sprout up in homes and businesses around the world. Future projections show a faster-than-expected shift toward data centers dominating global electricity demand.

Limited Spaces

Many data centers strive to cram as much equipment as possible into a compact space. This allows them to house more servers under one roof without having to expand or relocate.

This tightly packed design, on the other hand, reduces ventilation. When every new piece of equipment adds a faster, more powerful system to a rack already crammed with high-power processors running at maximum capacity, ventilation becomes a concern.

We’re all aware of the dangers of excessive heat on computer hardware. Under stress, servers, network infrastructure, storage devices, and other equipment in close proximity to a source of intense heat can fail.

Though improving your cooling method may appear costly and unpleasant, the expense is nothing compared to the costs of unplanned downtime, replacement of damaged equipment, and unhappy customers. Even if inadequate cooling has no visible effects on your data center, it’s likely that your hardware will have a far shorter lifespan than it would if it were kept at controlled temperatures. Failure to invest in cooling upfront will surely result in greater, unanticipated costs in the long run.

What Are Some Data Center Cooling Methods?

A data center can be cooled in one of two ways: air-based cooling or liquid-based cooling.

Air-Cooling

The first and most common type of air-based cooling is known as the ‘cold aisle/hot aisle’ layout. The aim is to separate the cold and hot air. This is accomplished by facing the cold (intake) sides of the cabinets away from the hot (exhaust) sides, resulting in a convection system in which the cabinets cool themselves. However, this does not always work, and data center managers must then pump in additional chilled air. Because this older, inefficient method has limits, many data centers are turning to new technologies.

Cold or hot aisle containment is a related approach that improves on the older cold aisle/hot aisle system by physically isolating the servers so that hot and cold air do not mix. This is made easier by drawing air directly from the CRAC unit. This method is quite effective; however, it can still suffer from hot spots.

In-rack heat extraction is the final technology in the realm of air-based cooling. This approach serves the same purpose of removing hot air, but it does so by incorporating compressors and chillers into the rack itself. As for containment, Schneider Electric reports that both Hot Aisle Containment (HACS) and Cold Aisle Containment (CACS) can generate savings, and that hot aisle containment can save up to 40% more than cold aisle containment. CACS traps cold air inside the contained aisle, allowing the rest of the data center to function as a hot-air return. HACS, on the other hand, captures hot air and allows it to escape through an exhaust system.

Liquid-Cooling – Innovative Data Center Cooling Methods

The first liquid-based cooling technologies were water-cooled racks and servers. In this method, water cools the hot side of the cabinet to lower the temperature. Because water conducts electricity, it never comes into contact with the actual components. The water is held in basins and then pumped through pipes by cooling tower pumps. It flows alongside the servers behind a barrier, and the cold water helps lower the temperature of the internal components. This technology works effectively, but many data center managers are hesitant to use it because of the potential for leaks.

Liquid immersion cooling is the next liquid-based cooling technology. In this method, the servers are entirely submerged in a dielectric fluid, and the liquid coolant flows across the hot components to cool them down. Although this fluid does not conduct electricity, it can damage components if it is not used appropriately.

4 Liquid Cooling Methods

Many innovative data center cooling methods that utilize liquid cooling have been developed in response to rising rack power densities. Here are a few of the more intriguing choices to keep an eye on.

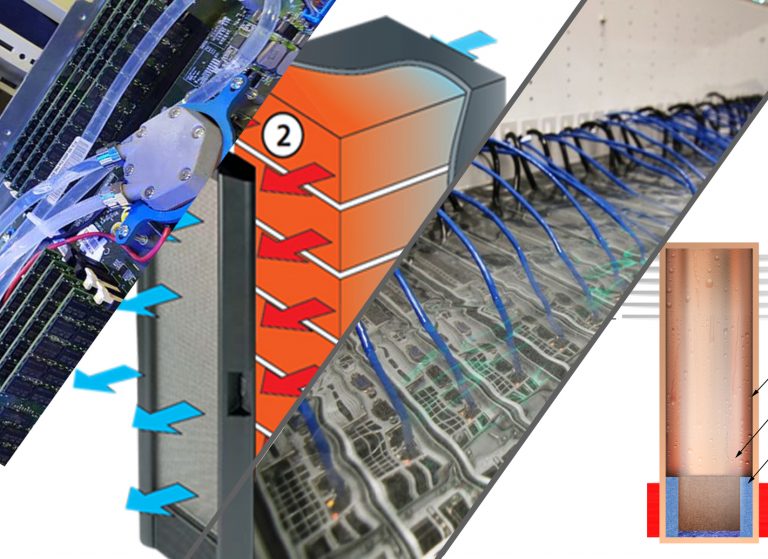

- Rear-Door Heat Exchangers – A passive or active liquid heat exchanger replaces the rear door of the IT equipment rack. These systems can be used in conjunction with air-cooling systems to cool areas with mixed rack densities.

- Direct-To-Chip Liquid Cooling – Cold plates are placed directly on top of the board’s heat-generating components and remove heat using single-phase liquid or two-phase evaporation units. About 70-75 percent of the heat generated by the equipment in the rack can be removed by these cooling technologies, leaving 25-30 percent to be removed by air-conditioning systems.

- Immersion Cooling – Immersion cooling systems, both single-phase and two-phase, submerge servers and other components in a thermally conductive dielectric liquid or fluid, removing the need for air cooling. This method maximizes the liquid’s heat transfer capabilities and is the most energy-efficient liquid cooling method available.

- Thermosiphons – A thermosiphon is a passive heat exchange technology that uses natural convection to circulate a fluid without requiring a mechanical pump. Thermosiphons are used to circulate liquids and volatile gases in heating and cooling applications such as heat pumps, water heaters, boilers, and furnaces.
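As a rough illustration of the direct-to-chip figures above, the following sketch splits a rack's heat load between the liquid loop and the room's air conditioning. The function name and the 16 kW example rack are assumptions chosen for illustration; the 70-75 percent range comes from the list above.

```python
def split_heat_load(rack_power_kw: float, liquid_fraction: float) -> tuple[float, float]:
    """Return (heat removed by liquid, heat left for air cooling) in kW.

    liquid_fraction is the share of rack heat captured by the cold plates,
    for example 0.70 to 0.75 for direct-to-chip cooling.
    """
    if not 0.0 <= liquid_fraction <= 1.0:
        raise ValueError("liquid_fraction must be between 0 and 1")
    liquid_kw = rack_power_kw * liquid_fraction
    return liquid_kw, rack_power_kw - liquid_kw


if __name__ == "__main__":
    # Hypothetical 16 kW rack at both ends of the quoted range.
    for fraction in (0.70, 0.75):
        liquid_kw, air_kw = split_heat_load(16.0, fraction)
        print(f"{fraction:.0%} to liquid: {liquid_kw:.1f} kW liquid, {air_kw:.1f} kW air")
```

Even with direct-to-chip cooling, the remaining air-side load on a dense rack is not negligible, which is why these systems are usually paired with conventional air conditioning.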

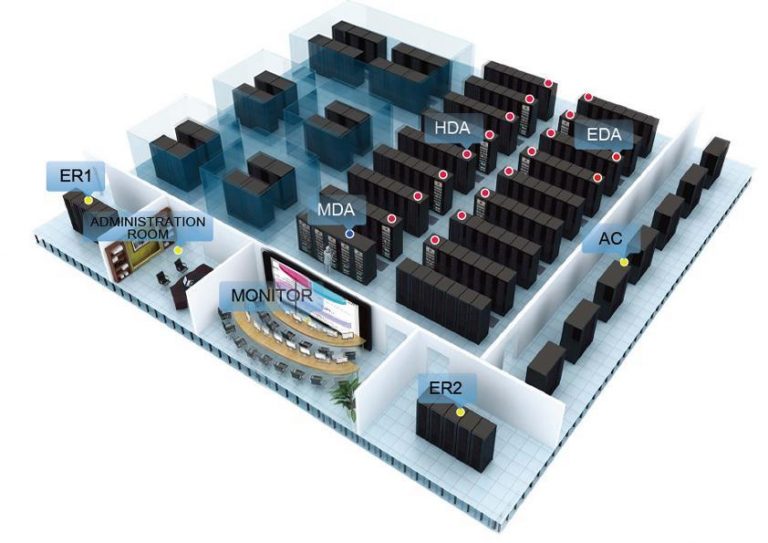

Data Center Monitoring Solutions

Regardless of which cooling technology you use, you must know that your cooling system is working. Overheating can occur during any outage, resulting in thermal shutdowns and equipment damage. Your revenue falls while your costs rise.

AKCP Monitoring Systems provides solutions for monitoring the ambient air temperature in server rooms and server racks. Temperature monitoring alone, however, does not guarantee that the cause of a problem will be discovered quickly. The server room may warm up rapidly, leaving you scrambling to figure out what’s wrong. It may be anything from the server to the power supply to the cold air supply to a plenum obstruction to a malfunctioning fan. Soon, you’ll be debating whether or not to turn off servers before they overheat.

Server Rack Monitoring

- Cold Aisle

Place dual temperature and humidity sensors in your cold aisle to check that the aisle is neither too hot nor wasting energy by being too cold.

- Cabinet Hotspots

Monitor temperature at the front and rear of the IT cabinet at the top, middle, and bottom, as well as the temperature differential from front to rear (the ΔT value).

- Differential Pressure

Monitor pressure differentials to confirm adequate airflow from the cold aisle to the hot aisle. The correct pressure differential lets you run more efficiently by preventing back pressure and hot air mixing back into the cold aisle.

- Water Leaks, Access Control, And Sensor Status Lights

Rope water sensors underneath raised access flooring, rack-level and aisle access control, and sensor status lights complete the intelligent containment monitoring system.

- Containment Maps In AKCPro Server DCIM

AKCPro Server includes dedicated desktops for displaying your containment monitoring system in an easy and visual interface. Install AKCPro Server on your own server, or take advantage of the built-in server that is embedded on every L-DCIM.
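The cabinet hotspot check described above can be sketched in a few lines. This is a generic illustration, not AKCP's software or API: the `RackReading` structure, the function name, and both thresholds (a 27 °C inlet limit and a 15 °C front-to-rear differential) are assumptions to be replaced with your own limits.

```python
from dataclasses import dataclass

POSITIONS = ("top", "middle", "bottom")


@dataclass
class RackReading:
    """Hypothetical container for one cabinet's front and rear temperatures in Celsius."""
    rack_id: str
    front_c: dict  # position -> inlet (cold aisle side) temperature
    rear_c: dict   # position -> exhaust (hot aisle side) temperature


def find_hotspots(reading: RackReading,
                  max_inlet_c: float = 27.0,
                  max_delta_t_c: float = 15.0) -> list[str]:
    """Flag positions where the inlet is too warm or the front-to-rear rise is too large."""
    alerts = []
    for pos in POSITIONS:
        inlet = reading.front_c[pos]
        delta_t = reading.rear_c[pos] - inlet
        if inlet > max_inlet_c:
            alerts.append(f"{reading.rack_id} {pos}: inlet {inlet:.1f} C exceeds {max_inlet_c:.1f} C")
        if delta_t > max_delta_t_c:
            alerts.append(f"{reading.rack_id} {pos}: delta T {delta_t:.1f} C exceeds {max_delta_t_c:.1f} C")
    return alerts


if __name__ == "__main__":
    sample = RackReading(
        rack_id="R1",
        front_c={"top": 28.0, "middle": 24.0, "bottom": 22.0},
        rear_c={"top": 40.0, "middle": 36.0, "bottom": 39.0},
    )
    for alert in find_hotspots(sample):
        print(alert)
```

Checking the top, middle, and bottom separately matters because hot air recirculating over the top of a cabinet can raise the top inlet temperature while the lower positions still look healthy.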

Liquid Cooling Monitoring

Liquid-cooled systems are gaining popularity. By pumping cold water or liquid refrigerant in closed systems in or near the racks, data center administrators may get greater cooling for less energy. They’re available in a variety of configurations, including device-mounted, rack-integrated, and rack attachments, but they’re expensive and necessitate a closer look at humidity. To avoid condensation, the data center must carefully regulate humidity levels in its hottest and coldest areas.
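Condensation forms when a surface is colder than the dew point of the surrounding air, so one way to reason about the humidity concern is to compare coolant or pipe surface temperature against the dew point. The sketch below uses the Magnus approximation for dew point; the coefficient values are a commonly used set, and the 2 °C safety margin and function names are illustrative assumptions.

```python
import math

# Magnus approximation coefficients (a commonly used set for water vapour over liquid water).
MAGNUS_A = 17.62
MAGNUS_B = 243.12  # degrees Celsius


def dew_point_c(air_temp_c: float, relative_humidity_pct: float) -> float:
    """Approximate dew point in Celsius from air temperature and relative humidity."""
    if not 0.0 < relative_humidity_pct <= 100.0:
        raise ValueError("relative_humidity_pct must be in (0, 100]")
    gamma = (math.log(relative_humidity_pct / 100.0)
             + MAGNUS_A * air_temp_c / (MAGNUS_B + air_temp_c))
    return MAGNUS_B * gamma / (MAGNUS_A - gamma)


def condensation_risk(surface_temp_c: float, air_temp_c: float,
                      relative_humidity_pct: float, margin_c: float = 2.0) -> bool:
    """True when the surface is within margin_c of the dew point, or below it."""
    return surface_temp_c < dew_point_c(air_temp_c, relative_humidity_pct) + margin_c


if __name__ == "__main__":
    # Hypothetical room at 25 C and 50 percent relative humidity.
    print(f"Dew point: {dew_point_c(25.0, 50.0):.1f} C")
    print("12 C coolant pipe at risk:", condensation_risk(12.0, 25.0, 50.0))
    print("18 C coolant pipe at risk:", condensation_risk(18.0, 25.0, 50.0))
```

Pairing a humidity sensor with coolant inlet temperature readings in this way gives an early warning before moisture actually appears on pipes or cold plates.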

- Temperature Sensors

Wireless temperature sensors or K-type thermocouples measure and record the temperatures of the server surface, the liquid bath, and the inlet and outlet coolant inside the coils.

- Wireless Valve Control

Monitoring pipe pressures and flow and controlling valves are important for the proper functioning of water distribution systems, preventing overpressure, and prolonging the life of pipes and pumps. With Wireless Tunnel pipe pressure sensors, flow meters, valve controllers, and variable frequency drives for pumps, you can do all this and more with our centralized monitoring platform, AKCPro Server.

- Power Monitoring Sensor

The power drawn by the cooling unit (including the pump and the fan) can be monitored with a power meter that records real-time power consumption. The AKCP Power Monitor Sensor gives vital information and allows you to monitor power remotely, eliminating the need for manual power audits and providing immediate alerts to potential problems. Power meter readings can also be used with the sensorProbe+ and AKCPro Server for live PUE calculations that analyze the efficiency of power usage in your data center. Data collected over time using the Power Monitor sensor can also be viewed using the built-in graphing tool.
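PUE (power usage effectiveness) is defined as total facility power divided by the power delivered to IT equipment, so a value closer to 1.0 means less overhead from cooling and other infrastructure. The sketch below shows the calculation on a few made-up meter readings; it is a generic illustration, not the AKCPro Server implementation, and the function name and sample values are hypothetical.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("it_equipment_kw must be positive")
    return total_facility_kw / it_equipment_kw


if __name__ == "__main__":
    # Hypothetical (total facility kW, IT equipment kW) samples from power meters.
    readings = [(150.0, 100.0), (162.0, 108.0), (171.0, 120.0)]
    values = [pue(total, it) for total, it in readings]
    for (total, it), value in zip(readings, values):
        print(f"total {total:.0f} kW, IT {it:.0f} kW -> PUE {value:.2f}")
    print(f"average PUE over samples: {sum(values) / len(values):.2f}")
```

Tracking this ratio over time, rather than as a single snapshot, makes it easier to see whether a cooling change actually reduced overhead.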
