Practical Steps to Cut Data Center Costs

The cost of operating data centers has been rising year on year. Building an average data center costs 10 to 12 million US dollars. Additional design specifications can push that figure even higher, and this is before equipment such as servers and networking is installed. The hard part about data center construction is that the costs are front-loaded in the initial deployment phase.

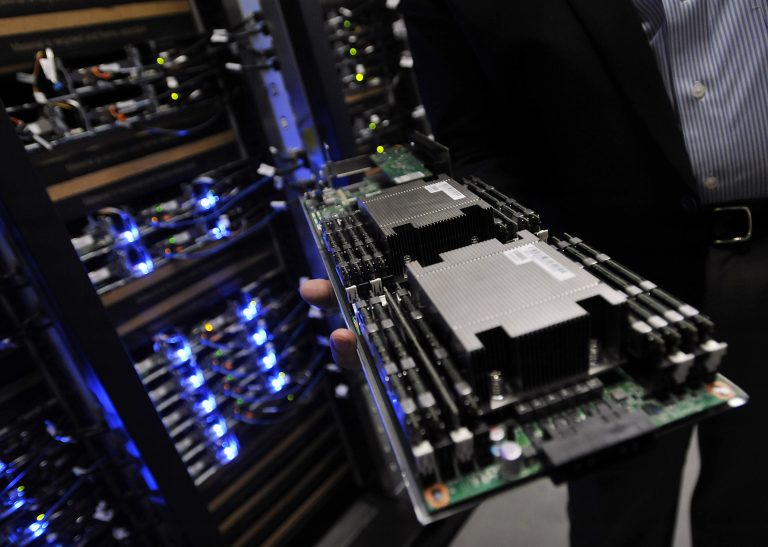

In terms of actual operations, data centers further invest in sophisticated computing hardware. Hyperscale data centers need additional capital for operational functions. This includes funds for cooling systems, alternate energy sources, and even artificial intelligence (AI).

While we are all for bigger and better outputs, the assets needed require huge financing. As such, alternate routes are needed to limit rising costs. Some experts claim that design is one factor to consider when driving down costs, and that it can result in a 25% decrease in costs.

Strategies To Cut Data Center Costs

Maximize Modular Solutions

Integrating modular solutions into a data center means combining parts into individual units (modules). The modular approach breaks scaling into manageable steps. Often, scaling happens only when there is strong demand for it. As part of modular solutions, hardware-specific strategies are of prime consideration. Some of the practical steps in incorporating modular solutions are as follows:

Measuring Efficiency Gains

To optimize modular solutions, lifecycle planning is vital. As modular scaling is dependent on need, expansion should be justified only when there are evidence-based efficiency gains. This ensures that capital for new hardware does not go to waste.
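As a rough illustration of need-driven scaling, the sketch below checks whether measured demand justifies another module. The function names, the kW units, and the 80% utilization ceiling are assumptions made for this example and do not come from any particular vendor or standard.

```python
import math


def modules_required(peak_demand_kw, module_capacity_kw, max_utilization=0.8):
    """Number of modules needed to serve peak demand without exceeding
    the chosen utilization ceiling (an assumed planning margin)."""
    if module_capacity_kw <= 0:
        raise ValueError("module capacity must be positive")
    if not 0 < max_utilization <= 1:
        raise ValueError("max_utilization must be in (0, 1]")
    if peak_demand_kw < 0:
        raise ValueError("demand cannot be negative")
    usable_kw = module_capacity_kw * max_utilization
    return math.ceil(peak_demand_kw / usable_kw)


def should_expand(installed_modules, peak_demand_kw, module_capacity_kw,
                  max_utilization=0.8):
    """True only when measured demand exceeds what is already installed."""
    needed = modules_required(peak_demand_kw, module_capacity_kw, max_utilization)
    return needed > installed_modules


# Hypothetical figures: 900 kW peak demand, 500 kW modules.
print(modules_required(900, 500))
print(should_expand(3, 900, 500))
```

Feeding the function measured peak demand rather than a forecast keeps the expansion decision tied to evidence, which is the point of the paragraph above.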

Setting Targets

An easier way to establish data for efficiency gains is to set efficiency targets. Reviewing the historical energy data will provide standard targets if the prime consideration is energy consumption.
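One possible way to turn historical energy data into a target is to take the average of past readings as a baseline and aim a fixed percentage below it. In the sketch below, the function name, the 5% reduction, and the sample readings are all hypothetical.

```python
from statistics import mean


def efficiency_target(monthly_kwh, reduction=0.05):
    """Return (baseline, target) in kWh from historical monthly readings.

    The baseline is the plain average of the history; the target is the
    baseline reduced by the requested fraction.
    """
    if not monthly_kwh:
        raise ValueError("need at least one historical reading")
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    baseline = mean(monthly_kwh)
    return baseline, baseline * (1 - reduction)


# Hypothetical monthly consumption, for illustration only.
history = [120_000, 118_000, 125_000, 121_000]
baseline, target = efficiency_target(history, reduction=0.05)
print(f"baseline: {baseline:.0f} kWh, target: {target:.0f} kWh")
```

A real target would likely account for seasonality and IT load growth; the average is only the simplest starting point.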

Invest In Innovation

Innovation does not necessarily mean building solutions from the ground up. Owing to the nature of the modular approach, innovation comes from carefully choosing the best-fit equipment. A sufficient server load and a particular rack-level cooling unit are fundamental innovations in designing a modular solution.

Analyzing Data Storage

One of the ways to cut data center costs is to pinpoint data volume. A fundamental principle in data storage goes “purge whenever possible.” Before purging, it is essential to filter the data and examine it: what is worth keeping, and for how long? This can eventually lead to better data lifecycle strategies.

The capacity benefits of HDDs and flash storage may not be the most feasible given the data volume. That is why some data centers are resorting to cold storage. This is where rarely accessed but pertinent data is stored. Using cold storage helps reduce energy overhead.
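The filter-then-purge idea can be sketched as a simple tiering rule based on how long ago a dataset was last accessed. The tier names, the 180-day and 730-day thresholds, and the `must_retain` flag are assumptions for illustration; real retention periods depend on business and regulatory requirements.

```python
from datetime import date


def classify(last_accessed, must_retain, today,
             cold_after_days=180, purge_after_days=730):
    """Assign a dataset to 'hot', 'cold', or 'purge'.

    Data flagged must_retain is never purged; once old enough it is
    moved to cold storage instead.
    """
    age_days = (today - last_accessed).days
    if age_days >= purge_after_days and not must_retain:
        return "purge"
    if age_days >= cold_after_days:
        return "cold"
    return "hot"


today = date(2024, 1, 1)
print(classify(date(2023, 12, 1), False, today))  # recently used
print(classify(date(2023, 1, 1), False, today))   # a year old
print(classify(date(2021, 1, 1), True, today))    # old but must be kept
```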

Choose A Supplier With Better Support Value

The problem with new hardware purchases is the limited service life. Hardware bought four years ago will be past its service timeline. By this time, new features have already been introduced in the market. Purchasing these new features for better operational value will incur support costs.

This is where third-party maintenance providers are valuable. Their edge lies in support and maintenance offerings that give data centers better value. Often, they offer new features at half the cost. Third-party providers are also efficient players in contract management.

Hardware Lifespan Is A Priority

When purchasing hardware, one should consider what happens after the warranty ends. For many traditional operators, hardware lifespan depends on the support period, which essentially lasts five years. Other data centers maximize hardware by running it until it fails.

There is a practical solution to both approaches. Major manufacturers nowadays are keen on providing value on a product-by-product basis. This means data centers can deliberately assess the IT assets that best suit their requirements and, in essence, maximize the return on their investment. And because support value differs for each piece of hardware, so do the risk profiles. The result is ultimately a better hardware lifespan.

Be Proactive

Taking a proactive stance on data center operation might be the most basic management requirement. As data center managers, we are often hounded by constant support requests. Increased maintenance and support checks add to the financial burden and require additional human resources as well. Proactively seeking newer innovations that limit wasteful processes can help increase savings.

A proactive approach can influence budget priorities to cut down data center costs. It shows up as sounder decision-making that favors the most cost-saving option.

Look For Brand-Agnostic Units

Let’s face it, equipment will get replaced once it runs its course. The key is to get the product that provides the most cost-saving return. You can do this by:

- Opting Out Of Big-Name Hardware. There are cheaper alternatives to the known brands in the market. Of course, one should make sure the hardware is reliable before purchase. There are non-household names that can offer the same performance. The most significant advantage of this equipment is that it is brand-agnostic, meaning it can fit a universal specification.

- Knowing Markup Prices. Hardware manufacturers often offer goods at a markup. Having some market knowledge of hardware prices bodes well for negotiations. Understanding markup trends can be an advantage when asking for discounts.

Virtualization

The ultimate upside of virtualization is running more applications on less hardware. In this case, overall costs are cut significantly. Virtualization works by running applications on underused servers. It replaces the common 1:1 practice of running one application per server.

However, it is important to conduct a comprehensive review of the data center before the transition. A server inventory is a must to make sure the hardware fits the virtualization requirements. The move to virtualize requires careful planning, and the transition often happens in phases. It is always hard to go all out and virtualize. As such, some data centers go through a para-virtualized arrangement first.
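As part of that planning, a server inventory can be used to estimate how many hosts a consolidated setup would need. The sketch below uses first-fit decreasing, a common bin-packing heuristic, and is not a description of how any particular hypervisor places workloads. Loads are expressed as a fraction of one host's capacity, and the 80% ceiling and the sample loads are assumptions.

```python
def consolidate(app_loads, host_capacity=0.8):
    """Pack application loads onto hosts with first-fit decreasing.

    Each load is the fraction of one host the application uses today.
    Returns a list of hosts, each a list of the loads placed on it.
    """
    hosts = []
    for load in sorted(app_loads, reverse=True):
        if load > host_capacity:
            raise ValueError(f"load {load} exceeds host capacity")
        for host in hosts:
            # Small tolerance guards against floating-point rounding.
            if sum(host) + load <= host_capacity + 1e-9:
                host.append(load)
                break
        else:
            hosts.append([load])  # no existing host had room
    return hosts


# Eight hypothetical applications, each on its own underused server today.
loads = [0.10, 0.15, 0.20, 0.05, 0.30, 0.25, 0.10, 0.15]
hosts = consolidate(loads)
print(f"{len(loads)} servers -> {len(hosts)} hosts")
```

CPU fraction alone is a simplification; memory, storage I/O, and failover headroom would also constrain a real placement.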

Optimizing Best Practices

Cutting data center costs may not be a realistic proposition for a stand-alone data center. As a complex system, a data center requires staffing, and staffing costs can get high. This is where partnership and collaboration can help. Outsourcing partners can provide the necessary expertise on an as-needed basis, which avoids paying for full-time staff.

Often, operational value is broken down into components. Whether it is monitoring, maintenance, or any other support task, an external resource can fill the gap. Advances in machine learning further highlight this. With much better computing capacities, additional administrators may not be the immediate solution.

The value of collaboration in a highly competitive industry cannot be overstated. All data center operators strive for best practices and improved results. While competition is healthy, collaborating to improve together is noteworthy. When operators come together, it encourages other actors to seek a piece of the pie, on the premise that they offer something in return. Hence, a win-win for all.

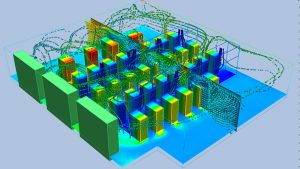

Cut Costs By Monitoring

As an integral part of data center operations, monitoring solutions incur significant capital investment. Sensor infrastructures measure and monitor the operating environment to keep it running efficiently. However, traditional wired infrastructure for monitoring is expensive. This is where wireless technologies have the advantage: deployment costs are significantly lower.

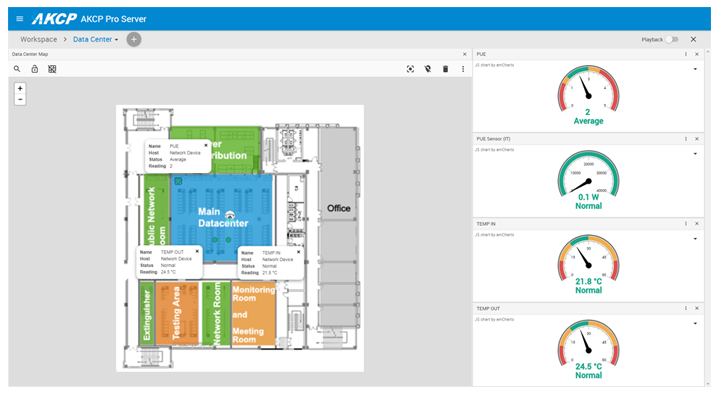

AKCP has wireless data center monitoring solutions with specific features to meet data center monitoring requirements. AKCP wireless sensor technology provides long-range, high-penetration, low-power sensor communication. It provides the following monitoring capacities:

- Message Acknowledgment

- Instant Threshold Broadcasting

- Lower Bandwidth Utilization

The AKCP wireless sensor system is the world’s first LoRa-based monitoring system with features specific to data center monitoring. It comprises the following:

- Wireless Tunnel Sensor

- Wireless Tunnel Gateway Server

- AKCPro Server

AKCP Wireless Tunnel Sensors can be configured to connect with the nearest gateway for reliability and security. Each sensor can buffer its data internally and upload it as soon as there is a connection, which ensures no data is lost. In line with this, sensors require a gateway communication pathway. The Wireless Tunnel Gateway Server can collect and store data from 30 sensors. Lastly, AKCP has an end-to-end data center monitoring solution called AKCPro Server DCIM. It provides comprehensive ground visibility of all monitoring data.
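Two points from the paragraph above lend themselves to a small sketch: sizing gateways from the 30-sensor figure, and the store-and-forward pattern of buffering readings until a connection exists. The code below is a generic illustration only. It is not AKCP firmware or an AKCP API, and the class, function, and parameter names are hypothetical.

```python
import math
from collections import deque

SENSORS_PER_GATEWAY = 30  # figure quoted in the text above


def gateways_needed(sensor_count):
    """Minimum number of gateways for a given number of sensors."""
    if sensor_count < 0:
        raise ValueError("sensor_count cannot be negative")
    return math.ceil(sensor_count / SENSORS_PER_GATEWAY)


class BufferedSensor:
    """Store-and-forward: queue readings while offline, flush once connected."""

    def __init__(self, send):
        self._send = send        # hypothetical callable delivering one reading
        self._pending = deque()  # readings not yet delivered, oldest first
        self.connected = False

    def record(self, reading):
        self._pending.append(reading)
        if self.connected:
            self.flush()

    def flush(self):
        # Remove a reading only after it has been handed to send().
        while self._pending:
            self._send(self._pending[0])
            self._pending.popleft()


received = []
sensor = BufferedSensor(received.append)
sensor.record(21.5)      # offline: held in the buffer
sensor.connected = True
sensor.record(21.7)      # online: buffer is flushed in order
print(received, gateways_needed(95))
```

A production design would also bound the buffer size and handle a failed send, both of which are left out here for brevity.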

What Is Important?

Data centers need to keep pace with innovations. But sometimes, innovation comes at a higher cost. While adopting enhanced architecture is essential, the cost that goes with it may not promise significant returns. Any effort to cut data center costs should start with a thorough look at where the data center is heading. As mentioned, expansion should be supported by concrete demand.

While we are working towards meeting increasing operational demand, our current data center operations should be the starting point for action. This means identifying current shortcomings and addressing them with future improvements in mind. Something as basic as setting efficiency targets can lead to better savings. The choices we make under current conditions should stand the test of time and lead to a successful operational future.

Reference Links:

https://www.datacenterdynamics.com/en/marketwatch/cutting-data-center-construction-costs-use-supporting-infrastructure-ptss-peter-sacco/

https://www.parkplacetechnologies.com/blog/6-practical-fast-action-ways-to-cut-data-center-costs/

https://www.informationweek.com/cloud/cut-data-center-costs-without-sacrificing-performance

About the Author

For over two decades at AKCP, I have been focused on a single mission: bringing complete visibility, security, and efficiency to the world’s critical infrastructure.

I believe that in the modern data center, AI is only as good as the data it receives. My goal is to ensure facilities have the precise sensor facts needed to control AI opinions, ultimately reducing PUE, releasing stranded capacity, and ensuring maximum uptime.