What You Should Know About Data Center Cooling Technologies When Selecting a Co-Location Provider

Data centers are huge power consumers. Apart from the IT load itself, the cooling system is one of the biggest energy consumers in a data center. Combined, data centers account for around 3% of the world's total energy usage. That is significant, both for the environment and for operational costs.

For customers in co-location data centers, it is vital to understand the cooling system and its associated energy costs. This allows you, as the client, to understand the potential costs as your requirements scale: increased capacity brings increased energy usage and cooling requirements. A well-designed, efficiently cooled data center will dramatically decrease your operational costs.
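A common way to express cooling overhead is Power Usage Effectiveness (PUE): total facility power divided by IT power, so any value above 1.0 is overhead, most of it cooling. The sketch below is a minimal estimate, assuming you are billed for facility energy in proportion to your IT load. The function name, the 20 kW load, the PUE values and the $0.10/kWh price are all hypothetical, and real co-location contracts are often priced differently (for example per committed kW).

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def annual_energy_cost(it_load_kw: float, pue: float, price_per_kwh: float) -> float:
    """Estimate the yearly energy cost of an IT load, including facility overhead.

    PUE = total facility power / IT power, so facility power = IT load x PUE.
    Assumption (hypothetical): overhead is billed in proportion to IT load.
    """
    if it_load_kw < 0:
        raise ValueError("IT load cannot be negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    facility_kw = it_load_kw * pue
    return facility_kw * HOURS_PER_YEAR * price_per_kwh


# Hypothetical example: 20 kW of IT load at $0.10/kWh in two facilities
for pue in (1.3, 1.8):
    cost = annual_energy_cost(20, pue, 0.10)
    print(f"PUE {pue}: ${cost:,.0f} per year")
```

With these example inputs the facility at PUE 1.8 costs $31,536 a year against $22,776 at PUE 1.3, a difference of $8,760 for exactly the same IT load, and the gap grows in proportion to the load as you scale.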

Data Center Power Requirements

Before deploying a data center or renting co-location space, it is important to first assess the power requirements. For example, deploying several high-power servers in a high-density cabinet will cost considerably more than running a number of less powerful servers in a low-density setup. Ensure that your PDUs (Power Distribution Units) can supply the current, in amps, that the equipment will draw. Backup power systems such as UPSs (Uninterruptible Power Supplies) should be sized accordingly, with enough capacity to run the servers until the backup generators kick in.
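The power checks in this paragraph reduce to simple arithmetic. The sketch below is illustrative only, assuming a 0.95 power factor, an 80% continuous-load limit on breakers (common practice in North America, but check your local electrical code) and a 90% efficient UPS inverter. The function names and the 5 kW, 208 V, 30 A and 2 kWh figures are hypothetical.

```python
import math


def load_current_amps(load_w: float, volts: float, power_factor: float = 0.95,
                      three_phase: bool = False) -> float:
    """Current drawn by a load.

    Single-phase: I = P / (V x PF)
    Three-phase:  I = P / (sqrt(3) x V_line-to-line x PF)
    """
    divisor = volts * power_factor
    if three_phase:
        divisor *= math.sqrt(3)
    return load_w / divisor


def pdu_has_capacity(load_w: float, volts: float, breaker_amps: float,
                     power_factor: float = 0.95, three_phase: bool = False,
                     derate: float = 0.8) -> bool:
    """True if the load stays within the derated breaker rating.

    Assumption: continuous loads are limited to 80% of the breaker rating.
    """
    amps = load_current_amps(load_w, volts, power_factor, three_phase)
    return amps <= breaker_amps * derate


def ups_runtime_minutes(battery_wh: float, load_w: float,
                        inverter_efficiency: float = 0.9) -> float:
    """Rough UPS runtime: usable battery energy divided by the load.

    Ignores battery ageing, temperature and discharge-rate effects.
    """
    return battery_wh * inverter_efficiency / load_w * 60


# Hypothetical cabinet: 5 kW of servers on a 208 V, 30 A circuit
print(round(load_current_amps(5000, 208), 1))             # single-phase amps
print(pdu_has_capacity(5000, 208, 30))                    # single-phase PDU
print(pdu_has_capacity(5000, 208, 30, three_phase=True))  # three-phase PDU
print(round(ups_runtime_minutes(2000, 5000), 1))          # minutes on battery
```

With these inputs the single-phase circuit would draw about 25.3 A, above the 24 A derated limit, while a three-phase feed at the same voltage draws about 14.6 A and passes. The 2 kWh battery gives roughly 21.6 minutes, which you would compare against your generators' start and transfer time.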

When selecting a co-location facility, check its specifications for the amount of power it can provide to each cabinet. If you are planning a high-density deployment, you would be looking for 10-20 kW per cabinet. Even if you don’t need this much power initially, always keep your future needs and scalability in mind. It is always easier to scale up and add more servers than to migrate to another facility.
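To weigh current against future needs, you can estimate how many servers fit within a cabinet's power budget and how long compound growth takes to fill it. This is a rough sketch with hypothetical names and inputs (15 kW cabinets, 0.75 kW servers, 8 kW today, 20% annual growth); it ignores cooling limits and redundancy requirements.

```python
import math


def servers_per_cabinet(cabinet_kw: float, server_kw: float) -> int:
    """How many servers of a given draw fit within a cabinet's power budget."""
    return math.floor(cabinet_kw / server_kw)


def years_until_full(current_kw: float, cabinet_kw: float, annual_growth: float) -> float:
    """Years of compound load growth before the load reaches the cabinet budget.

    Solves current_kw * (1 + growth) ** years = cabinet_kw for years.
    """
    if current_kw >= cabinet_kw:
        return 0.0
    if annual_growth <= 0:
        return math.inf  # the load never grows into the budget
    return math.log(cabinet_kw / current_kw) / math.log(1 + annual_growth)


# Hypothetical: 15 kW cabinets, 0.75 kW servers, 8 kW today, 20% growth per year
print(servers_per_cabinet(15, 0.75))
print(round(years_until_full(8, 15, 0.20), 1))
```

With these inputs a cabinet holds 20 such servers and the load reaches the 15 kW budget in about 3.4 years, which is the kind of horizon worth discussing with a provider before signing.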

Data Center Cooling Technologies

The high cost of cooling systems is one of the primary reasons that companies migrate to co-location data center services. In-house data centers are often inefficient in their design and operation, so it is frequently cheaper to outsource than to host in-house, especially as your computing needs increase. Large data center facilities invest heavily in monitoring systems that provide feedback and data on their operations, allowing operators to make adjustments that improve efficiency. They also employ the best in data center design and construction. Companies such as Google are even using artificial intelligence to manage the cooling systems in their hyperscale data centers. These systems allow for predictive analysis of data center load, ramping servers and cooling capacity up and down in anticipation of high and low traffic.
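Google's system uses machine learning models that are not reproduced here. As a deliberately simple stand-in for the idea of predictive capacity planning, the sketch below forecasts the next hour's IT load with simple exponential smoothing and stages enough cooling units to cover it, plus one spare. The function names, the smoothing factor, the load history and the 50 kW unit size are hypothetical.

```python
import math
from typing import Sequence


def forecast_next(load_history_kw: Sequence[float], alpha: float = 0.5) -> float:
    """One-step-ahead load forecast using simple exponential smoothing.

    level = alpha * latest reading + (1 - alpha) * previous level
    """
    if not load_history_kw:
        raise ValueError("need at least one reading")
    level = load_history_kw[0]
    for reading in load_history_kw[1:]:
        level = alpha * reading + (1 - alpha) * level
    return level


def cooling_units_to_stage(forecast_kw: float, unit_capacity_kw: float,
                           spare_units: int = 1) -> int:
    """Units needed to cover the forecast, plus spares for redundancy (N+1 by default)."""
    return math.ceil(forecast_kw / unit_capacity_kw) + spare_units


# Hypothetical hourly IT load readings (kW) and 50 kW cooling units
history = [100, 120, 140, 160]
predicted = forecast_next(history)
print(predicted, cooling_units_to_stage(predicted, 50))
```

The forecast here is 142.5 kW, so four units are staged. Simple smoothing lags a rising trend (the latest reading is 160 kW), which is one reason production systems use richer models that account for trends and daily cycles.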

There is a wide variety of methods and technologies that can be used for data center cooling.

Calibrated Vectored Cooling (CVC). This is a method of cooling high-density servers. It ensures an optimal airflow path through the equipment, so that cold air moves across the major components of the circuit boards. As a result, fewer fans are needed to draw air through the servers, and cooling is more efficient.
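One general reason that better airflow paths save energy is the fan affinity laws: for the same fan in the same system, power scales with the cube of fan speed, so fans that can run slower use far less power. This is a general fan relationship, not a description of how CVC itself is implemented; the fan ratings below are hypothetical.

```python
def fan_power_watts(rated_power_w: float, rated_rpm: float, new_rpm: float) -> float:
    """Fan affinity law: for the same fan in the same system, power scales with speed cubed."""
    return rated_power_w * (new_rpm / rated_rpm) ** 3


# Hypothetical server fan: 15 W at 10,000 rpm, slowed to 8,000 rpm
print(round(fan_power_watts(15, 10_000, 8_000), 2))
```

Slowing the example fan by 20% cuts its power from 15 W to about 7.7 W, roughly half.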

Chilled Water System. Centralized cooling of the data center hall, commonly deployed in mid-sized to large data centers. Chilled water cools the air that is drawn through the Computer Room Air Handlers (CRAHs). The chilled water is supplied by a chiller plant located in another area of the data center.

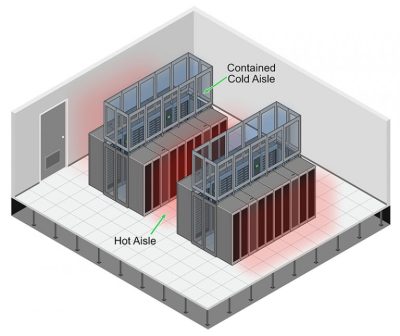
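Sizing a chilled water system starts from the heat load. The sketch below converts a load in kW to tons of refrigeration and to chilled water flow using the common water-side rule of thumb Q (BTU/h) = 500 × GPM × ΔT (°F), which assumes plain water; glycol mixtures need a different constant. The function name, the 300 kW load and the 10 °F temperature rise are hypothetical.

```python
from typing import Tuple

BTU_PER_HR_PER_KW = 3412.14   # 1 kW of heat is about 3,412 BTU/h
BTU_PER_HR_PER_TON = 12_000   # 1 ton of refrigeration = 12,000 BTU/h


def chilled_water_requirements(heat_load_kw: float, delta_t_f: float) -> Tuple[float, float]:
    """Return (cooling tons, chilled water flow in US gallons per minute).

    Uses Q[BTU/h] = 500 x GPM x delta-T[degF], where
    500 ~= 8.33 lb/gal x 60 min/h x 1 BTU/(lb.degF) for plain water.
    """
    btu_per_hr = heat_load_kw * BTU_PER_HR_PER_KW
    tons = btu_per_hr / BTU_PER_HR_PER_TON
    gpm = btu_per_hr / (500 * delta_t_f)
    return tons, gpm


# Hypothetical: 300 kW hall, 10 degF rise between chilled water supply and return
tons, gpm = chilled_water_requirements(300, 10)
print(f"{tons:.1f} tons, {gpm:.0f} GPM")
```

For these inputs it prints about 85.3 tons and 205 GPM.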

Hot / Cold Aisle Design. This is a common design method in modern data centers. The cabinets are arranged in alternating hot and cold rows. Cool air is supplied to the cold aisles, where the servers draw it in, and the servers expel hot air into the hot aisles. This stops the hot air from mixing with the cold air. The hot air is then returned to the cooling system, which removes the heat before the air is supplied back to the cold aisle.
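How much cold air a cold aisle needs follows from the sensible heat equation: airflow = heat load / (air density × specific heat × temperature rise). The sketch below assumes typical room-condition values for the density and specific heat of air; the function name, the 10 kW cabinet and the 12 K rise are hypothetical.

```python
AIR_DENSITY_KG_M3 = 1.2     # approx. density of air at room conditions
AIR_CP_J_KG_K = 1005.0      # approx. specific heat of air
CFM_PER_M3_S = 2118.88      # 1 m^3/s expressed in cubic feet per minute


def required_airflow_m3_s(heat_load_w: float, delta_t_k: float) -> float:
    """Airflow needed to carry away a heat load: V = P / (rho x cp x delta-T)."""
    return heat_load_w / (AIR_DENSITY_KG_M3 * AIR_CP_J_KG_K * delta_t_k)


# Hypothetical 10 kW cabinet with a 12 K rise from cold aisle to hot aisle
flow = required_airflow_m3_s(10_000, 12)
print(f"{flow:.2f} m^3/s = {flow * CFM_PER_M3_S:.0f} CFM")
```

This gives roughly 0.69 m³/s, or about 1,460 CFM. The formula also shows why keeping the aisles separated matters: for a given heat load, the smaller the usable temperature difference, the more air the fans have to move.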

Computer Room Air Conditioner (CRAC). These work like the conventional direct expansion (DX) refrigerant air conditioners found in homes, which meter refrigerant through an expansion valve such as a thermostatic expansion valve (TXV). They are suitable for smaller data centers. They are relatively inefficient to operate, but come at a low initial capital expenditure.
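The trade-off between a low capital cost and higher running costs can be framed with the coefficient of performance (COP), the heat removed per unit of electricity used. The sketch below estimates annual cooling electricity for two systems and a simple payback period. The function names, COP values, energy price and $50,000 capital difference are hypothetical placeholders, not figures for any real CRAC or chiller.

```python
HOURS_PER_YEAR = 8760


def annual_cooling_kwh(heat_load_kw: float, cop: float) -> float:
    """Electricity used to remove a constant heat load for a year.

    COP (coefficient of performance) = heat removed / electrical power used.
    """
    return heat_load_kw / cop * HOURS_PER_YEAR


def break_even_years(extra_capex: float, annual_saving: float) -> float:
    """Years for an efficient system's lower running cost to repay its higher capital cost."""
    if annual_saving <= 0:
        return float("inf")
    return extra_capex / annual_saving


# Hypothetical: 100 kW heat load, COP 3 (CRAC-style) vs COP 5, $0.10/kWh,
# and a $50,000 higher up-front cost for the more efficient system
saving = (annual_cooling_kwh(100, 3) - annual_cooling_kwh(100, 5)) * 0.10
print(f"${saving:,.0f} saved per year, break-even in {break_even_years(50_000, saving):.1f} years")
```

With these inputs the more efficient system saves $11,680 a year and repays its extra cost in about 4.3 years. A simple payback like this ignores maintenance, financing and part-load behaviour.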

Computer Room Air Handler (CRAH). These are part of the chilled water plant system. The chiller supplies cold water to the cooling coils inside the CRAH. The CRAH has fans that draw air (in some designs, outside air) through filtration systems and across these coils, then blow the cooled air into the data center hall or cold aisle containment. Where the design draws in outside air, these systems are more efficient in places with colder year-round climates.

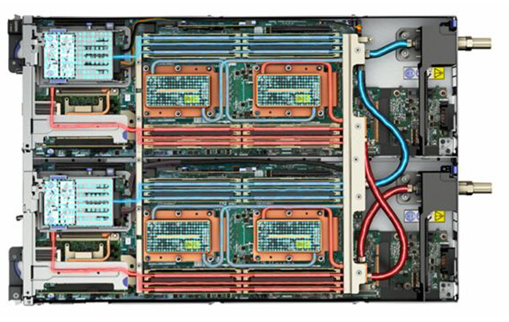

Direct To Chip Cooling. This is one of the most efficient methods of cooling servers. It uses a network of pipes that deliver cooled water to a cold plate mounted on the server's main board, directly over the chips. The metal plate acts as a heat sink, extracting heat from the chips, and the water carries this heat away to the chiller plant.
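The coolant flow a cold plate needs follows from Q = ṁ × cp × ΔT. The sketch below assumes plain water (specific heat about 4186 J/(kg·K), density about 1 kg/L). The function name, the 700 W chip and the 10 K coolant temperature rise are hypothetical, and glycol mixtures would need their own specific heat and density passed in.

```python
WATER_CP_J_KG_K = 4186.0   # specific heat of water
WATER_KG_PER_L = 1.0       # approximation; water is about 0.997 kg/L at 25 degC


def coolant_flow_l_min(heat_w: float, delta_t_k: float,
                       cp: float = WATER_CP_J_KG_K,
                       kg_per_l: float = WATER_KG_PER_L) -> float:
    """Liquid flow needed through a cold plate: m_dot = Q / (cp x delta-T), in L/min."""
    mass_flow_kg_s = heat_w / (cp * delta_t_k)
    return mass_flow_kg_s / kg_per_l * 60


# Hypothetical 700 W processor, coolant warming by 10 K across the cold plate
print(f"{coolant_flow_l_min(700, 10):.2f} L/min")
```

For these inputs the flow is about 1.00 L/min per cold plate.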

Immersion Cooling. This is a relatively new and innovative technology. The complete server is submerged in a non-conductive, non-flammable dielectric fluid. This technology is not yet common.

Free Cooling. When a data center is located in a cold climate, cold outside air can be introduced into the data center hall. Drawing in this naturally cold air and then expelling it outside is an extremely efficient form of cooling: only circulating fans and filters are required, avoiding the cost of running a chiller plant, compressors and pumps.
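Whether free cooling pays off depends on how many hours per year the outside air is cold enough. A minimal sketch, assuming outside air must be at least 2 °C below the supply setpoint to allow for fan heat and ignoring humidity limits; the function name, margin, setpoint and readings are hypothetical. In practice you would feed in a year of hourly data from a weather file for the site.

```python
from typing import Sequence


def free_cooling_fraction(hourly_outdoor_c: Sequence[float], supply_setpoint_c: float,
                          margin_c: float = 2.0) -> float:
    """Share of hours when outside air alone could meet the supply air setpoint.

    Assumption: outside air must be at least `margin_c` below the setpoint to allow
    for fan heat. Humidity limits are ignored for simplicity.
    """
    if not hourly_outdoor_c:
        raise ValueError("need at least one hourly reading")
    threshold = supply_setpoint_c - margin_c
    usable_hours = sum(1 for t in hourly_outdoor_c if t <= threshold)
    return usable_hours / len(hourly_outdoor_c)


# Hypothetical six hourly readings against a 20 degC supply setpoint
print(free_cooling_fraction([5, 10, 15, 20, 25, 30], 20))
```

Here three of the six readings are cold enough, so it prints 0.5.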

Mistakes Made In Data Center Cooling

Poor Layout. Cabinets should be laid out in a hot/cold aisle configuration, even if there is no segregation. The air handlers should be placed at the end of each row. An "island" configuration, with air handlers around the perimeter of the room, is highly inefficient.

Empty Cabinets. Empty cabinets, and empty space within cabinets, can disrupt airflow, especially in hot/cold aisle containment systems, because they allow cold air and hot air to mix. Empty space in cabinets should be closed off with blanking panels to maintain containment and pressure differentials.

Raised Floor Leakage. Raised access flooring was a popular data center design method, with cold air and cabling delivered through the space underneath the raised floor. If the raised floor has leaks, it can cause a loss of pressure and allow dust and humidity to enter the cold aisle. Pressure differential sensors can be used to monitor for leaks.
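Pressure differential monitoring can be as simple as comparing each sensor against an absolute minimum and against its own normal reading. The sketch below is an illustration, not any vendor's product logic; the function name, sensor names, the 5 Pa minimum and the 30% drop threshold are hypothetical and would need to be tuned to the actual floor.

```python
from typing import Dict, List


def pressure_alarms(current_pa: Dict[str, float], baseline_pa: Dict[str, float],
                    min_pa: float = 5.0, max_drop: float = 0.3) -> List[str]:
    """Flag differential-pressure sensors that suggest underfloor leakage.

    A sensor alarms if it reads below an absolute minimum, or if it has fallen by
    more than `max_drop` (as a fraction) from its normal baseline reading.
    """
    alarms = []
    for sensor, pa in sorted(current_pa.items()):
        if pa < min_pa:
            alarms.append(f"{sensor}: {pa:.1f} Pa is below the {min_pa:.1f} Pa minimum")
        elif sensor in baseline_pa and pa < baseline_pa[sensor] * (1 - max_drop):
            alarms.append(f"{sensor}: fell from {baseline_pa[sensor]:.1f} to {pa:.1f} Pa")
    return alarms


# Hypothetical readings from three cold-aisle sensors
baseline = {"row-A": 12.5, "row-B": 12.0, "row-C": 11.0}
current = {"row-A": 12.0, "row-B": 7.0, "row-C": 3.0}
for alarm in pressure_alarms(current, baseline):
    print(alarm)
```

With these readings, row-B is flagged for falling from 12.0 to 7.0 Pa and row-C for dropping below the minimum, while row-A's small drift is ignored.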

Cable Openings. Cabinets and floors have openings for cables to pass through. These openings should be sealed to prevent cold air from escaping and pressure from being lost. Openings can be sealed with cable brushes. Blanking panels with brushes can also be used to route cables from the front to the rear of the cabinets.

Air Handler Conflicts. When multiple air handling units (AHUs) control the same environment, one AHU may be trying to dehumidify the air while another is trying to humidify it. This wastes energy as the two units fight each other. Proper planning and management of AHU placement and humidity control points reduces how often this happens.
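Two control ideas from this paragraph can be sketched: detecting units that are fighting, and deciding the humidity mode once for the whole room from a shared reading with a deadband, so units cannot pull in opposite directions. The function names, unit names, mode names and the 40-60% RH band are hypothetical placeholders, not recommended setpoints.

```python
from enum import Enum
from typing import Dict


class HumidityMode(Enum):
    IDLE = "idle"
    HUMIDIFY = "humidify"
    DEHUMIDIFY = "dehumidify"


def units_in_conflict(unit_modes: Dict[str, HumidityMode]) -> bool:
    """True if at least one unit is humidifying while another is dehumidifying."""
    modes = set(unit_modes.values())
    return HumidityMode.HUMIDIFY in modes and HumidityMode.DEHUMIDIFY in modes


def shared_humidity_mode(room_rh: float, low_rh: float = 40.0,
                         high_rh: float = 60.0) -> HumidityMode:
    """Pick one mode for every unit from a single shared humidity reading.

    The gap between low_rh and high_rh is a deadband where no unit acts,
    so small fluctuations do not start units working against each other.
    """
    if room_rh < low_rh:
        return HumidityMode.HUMIDIFY
    if room_rh > high_rh:
        return HumidityMode.DEHUMIDIFY
    return HumidityMode.IDLE


# Hypothetical: two AHUs acting on their own local sensors end up fighting
local = {"AHU-1": HumidityMode.HUMIDIFY, "AHU-2": HumidityMode.DEHUMIDIFY}
print(units_in_conflict(local))     # True: the two units are fighting
print(shared_humidity_mode(50.0))   # one shared decision for the room
```

The shared controller returns `HumidityMode.IDLE` for a 50% RH reading, so neither unit acts.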

Conclusion

Power demands in data centers continue to increase, and AI computing requires large amounts of energy. As companies become increasingly reliant on technology, there is a shift from inefficient in-house computer rooms and mini data centers to renting space in co-location data centers. However, it is important to have a thorough understanding of the cooling systems deployed so you can evaluate the costs involved.

As it is difficult to migrate assets and data from one facility to another, it is wise to ensure that the co-location facility has sufficient power and cooling for your present and future needs.

AKCP provides a complete monitoring solution for the data center.

About the Author

For over two decades at AKCP, I have been focused on a single mission: bringing complete visibility, security, and efficiency to the world’s critical infrastructure.

I believe that in the modern data center, AI is only as good as the data it receives. My goal is to ensure facilities have the precise sensor facts needed to control AI opinions, ultimately reducing PUE, releasing stranded capacity, and ensuring maximum uptime.