10 Tips for Efficient Power Consumption in the Data Center

1. Turn Off Idling Motors.

2. Servers and Storage Virtualization

Virtualization won’t be the salvation for everyone. Your data center might have to be designed for periodic peak loads, in which case underutilized, idle hardware is par for the course. For most data centers, though, virtualization can bring great benefits.
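The trade-off between average and peak sizing can be sketched with a little arithmetic. The example below is only an illustration: the utilization figures, the 60 percent target, and the `hosts_required` helper are assumptions, and it treats every host as the same size.

```python
import math


def hosts_required(utilizations, target_utilization=0.6):
    """Estimate how many equally sized hosts can carry a combined load.

    utilizations: fraction of one host's capacity each workload needs (0.0-1.0).
    target_utilization: how full each consolidated host is allowed to run.
    """
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    combined = sum(utilizations)
    # At least one host is needed even for a negligible load.
    return max(1, math.ceil(combined / target_utilization))


# Hypothetical figures for eight physical servers.
average_load = [0.08, 0.12, 0.05, 0.10, 0.15, 0.07, 0.09, 0.11]
peak_load = [0.60, 0.75, 0.40, 0.55, 0.80, 0.35, 0.50, 0.65]

print("Hosts if sized for average load:", hosts_required(average_load))
print("Hosts if sized for simultaneous peaks:", hosts_required(peak_load))
```

With these made-up numbers, sizing for average load needs far fewer hosts than the original eight servers, while sizing for simultaneous peaks saves nothing, which is the situation the paragraph above warns about.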

3. Consolidation Strategy

- Tiered Storage

- Consolidated Storage

4. Turn on the CPU’s Power-Management Feature.

5. Efficient Power Supply for IT Equipment

After the CPU, the second biggest culprit in power consumption is the power supply unit (PSU), which converts incoming AC power to DC and requires about 25 percent of the server’s power budget for that task. The third is the point-of-load (POL) voltage regulators (VRs), which convert the 12V DC into the various DC voltages required by loads such as processors and chipsets.
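To see how conversion losses stack up, here is a minimal sketch that works backward from the load to the wall. The efficiencies are assumptions chosen for illustration: a 75 percent efficient PSU (one way to read the 25 percent figure above) and an 85 percent efficient voltage regulator stage.

```python
def input_power_w(load_w, stage_efficiencies):
    """Power that must enter a chain of converters to deliver load_w at the end.

    stage_efficiencies is ordered from the load back toward the wall,
    e.g. [voltage_regulator_efficiency, psu_efficiency].
    """
    power = load_w
    for efficiency in stage_efficiencies:
        if not 0 < efficiency <= 1:
            raise ValueError("efficiency must be in (0, 1]")
        power /= efficiency
    return power


# Assumed values, not measurements.
load_w = 300.0          # power actually used by processors, chipsets, etc.
vr_efficiency = 0.85    # point-of-load voltage regulators
psu_efficiency = 0.75   # AC-to-DC power supply unit

wall_w = input_power_w(load_w, [vr_efficiency, psu_efficiency])
psu_loss_w = wall_w * (1 - psu_efficiency)

print(f"Power drawn at the wall: {wall_w:.1f} W")
print(f"Lost in the PSU: {psu_loss_w:.1f} W ({psu_loss_w / wall_w:.0%} of input)")
```

Because the losses multiply, raising the efficiency of either stage reduces the power drawn at the wall for the same useful load.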

6. Use High-Efficiency UPS

IT equipment in the data center is not directly connected to the facility’s power supply. Usually, its power passes through an uninterruptible power supply (UPS), and power distribution units (PDUs) then distribute it at the required voltage to each rack or server.
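Since every watt of IT load passes through the UPS, its efficiency affects the bill around the clock. The sketch below estimates the energy lost in a UPS over a year; the 100 kW load and the 90 and 96 percent efficiencies are assumed values for illustration, and the load is treated as constant.

```python
HOURS_PER_YEAR = 8760


def annual_ups_loss_kwh(it_load_kw, ups_efficiency):
    """Energy lost in the UPS over one year for a constant IT load."""
    if not 0 < ups_efficiency <= 1:
        raise ValueError("ups_efficiency must be in (0, 1]")
    input_kw = it_load_kw / ups_efficiency  # power the UPS draws upstream
    return (input_kw - it_load_kw) * HOURS_PER_YEAR


# Assumed figures: a 100 kW IT load on an older versus a newer UPS.
old_loss = annual_ups_loss_kwh(100.0, 0.90)
new_loss = annual_ups_loss_kwh(100.0, 0.96)

print(f"Older UPS loss: {old_loss:,.0f} kWh/year")
print(f"Newer UPS loss: {new_loss:,.0f} kWh/year")
print(f"Estimated saving: {old_loss - new_loss:,.0f} kWh/year")
```

The estimate ignores the extra cooling needed to remove the heat from those losses, so the real saving would likely be somewhat larger.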

7. Cooling Best Practices

Up to 60% of the data center’s utility bill goes to cooling systems alone. Anyone might think that this is far too much energy to spend on one function. The cause is cooling equipment that is inefficiently deployed and not run at its recommended conditions.

Your facility might already have some of the resources needed to cut cooling costs. All you have to do is use them properly:

- Use hot aisle/cold aisle enclosure configurations. By alternating equipment so there is an aisle with a cold air intake and another with a hot air exhaust, you can create a more uniform air temperature.

- Use blanking panels inside equipment enclosures so that air from hot aisles doesn’t mix with air from cold aisles.

- Seal cable cutouts to minimize “bypass airflow,” whereby cool air short-cycles back to the cooling units instead of circulating evenly throughout the data center. This phenomenon affects as much as 60 percent of the cool-air supply in computer rooms.

- Orient computer room air-conditioning units close to the enclosures and perpendicular to hot aisles to maximize cooling where it’s needed most. Cooling systems can be optimized further by using air handlers and chillers with efficient technologies, such as variable frequency drives (VFDs) and air- or water-side economizers, and by setting humidity and temperature according to the American Society of Heating, Refrigerating, and Air-Conditioning Engineers (ASHRAE) guidelines.

8. Conduct An Energy Audit of your Data Center

9. Prioritization to Reduce Power Consumption

- Identifying and powering down underutilized equipment.

- Increasing equipment utilization through virtualization and consolidation.

- Selecting high-efficiency IT equipment.

- Upgrading UPSs to higher-efficiency technology.

- Implementing energy-efficient practices for cooling.

- Adopting power distribution at 208V/230V.

- In a greenfield data center, or in a major expansion/upgrade of an existing data center:

  - Get executive-level sponsorship and form a cross-functional team to develop an energy strategy for IT operations.

  - Include energy efficiency as a key requirement in design criteria alongside reliability and uptime.

  - Consider energy efficiency in calculations of the total cost of ownership when selecting new IT, backup power, and cooling equipment.
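The first priority in the list above, identifying underutilized equipment, could be approached along these lines. This is a rough sketch: the host names, the CPU samples, and the 10 percent threshold are all hypothetical, and real decisions would also look at memory, storage, and network use.

```python
from statistics import mean


def underutilized(samples_by_host, threshold_pct=10.0):
    """Return hosts whose mean CPU utilization is below threshold_pct.

    samples_by_host maps a host name to a list of CPU utilization samples
    in percent. Hosts with no samples are skipped. The result is sorted
    from least to most utilized.
    """
    averages = {
        host: mean(samples)
        for host, samples in samples_by_host.items()
        if samples
    }
    candidates = [host for host, avg in averages.items() if avg < threshold_pct]
    return sorted(candidates, key=averages.get)


# Hypothetical utilization samples collected over some period.
samples = {
    "web-01": [2, 4, 3],
    "db-01": [40, 55],
    "batch-01": [8, 9, 7],
    "spare-01": [],
}

print(underutilized(samples))
```

Hosts that show up in the result are candidates for consolidation or powering down, subject to checking what they are actually used for.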

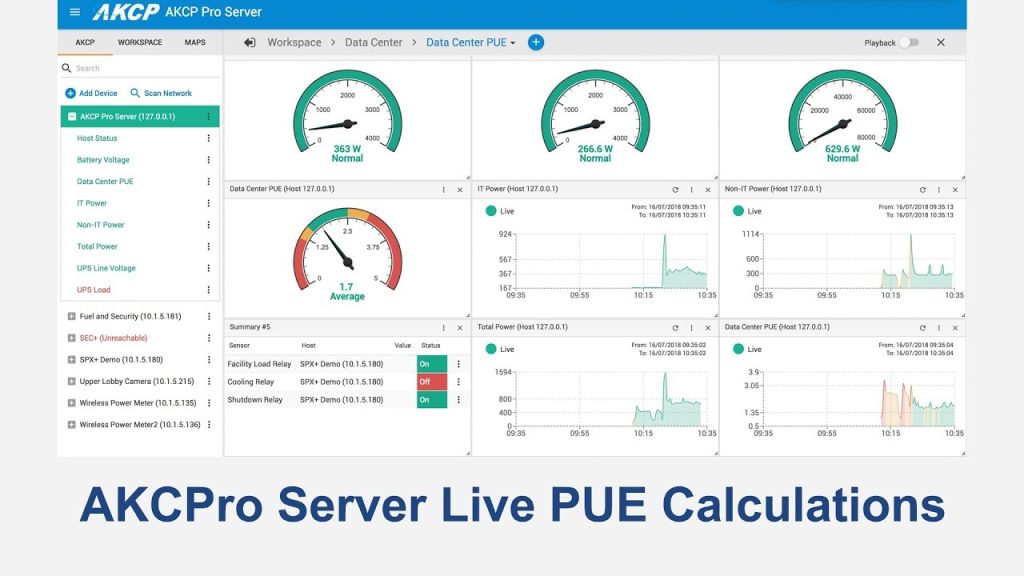

10. Easy Data Center Power Monitoring With AKCP

Monitor PUE in Real-Time.

Measuring the power consumed by IT and non-IT loads enables operators to benchmark and compare power consumption across a single or distributed deployment through Power Usage Effectiveness (PUE) calculations.

Evaluate future cost-saving measures using the initial PUE benchmark. PUE and other server room metrics can be tracked over time to help identify server room issues and opportunities for cost savings.

Automatic PUE Calculations.

Calculate PUE values using data polled from numerous devices. A real-time PUE value can be displayed rack by rack or for an entire server room. Power monitoring sensors collect all the data from IT and non-IT loads that are used in this calculation.
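PUE is the total facility power divided by the power delivered to IT equipment, so a value closer to 1.0 means less overhead. The sketch below shows the calculation from a set of polled readings. The reading format (`kind` and `kw` fields) and the sample values are assumptions made for illustration, not the output of any particular monitoring product.

```python
def pue(it_power_kw, non_it_power_kw):
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_power_kw <= 0:
        raise ValueError("IT power must be positive")
    if non_it_power_kw < 0:
        raise ValueError("non-IT power cannot be negative")
    return (it_power_kw + non_it_power_kw) / it_power_kw


def pue_from_readings(readings):
    """Aggregate sensor readings into a single PUE value.

    Each reading is a dict with a "kind" of "it" or "non_it" and a "kw" value.
    """
    it_kw = sum(r["kw"] for r in readings if r["kind"] == "it")
    non_it_kw = sum(r["kw"] for r in readings if r["kind"] == "non_it")
    return pue(it_kw, non_it_kw)


# Hypothetical readings polled from power monitoring sensors.
readings = [
    {"name": "rack-a", "kind": "it", "kw": 120.0},
    {"name": "rack-b", "kind": "it", "kw": 80.0},
    {"name": "cooling", "kind": "non_it", "kw": 90.0},
    {"name": "lighting", "kind": "non_it", "kw": 10.0},
    {"name": "ups-losses", "kind": "non_it", "kw": 20.0},
]

print(f"PUE: {pue_from_readings(readings):.2f}")
```

Running the same calculation on each new batch of readings gives a value that can be tracked over time against the initial benchmark.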


About the Author

For over two decades at AKCP, I have been focused on a single mission: bringing complete visibility, security, and efficiency to the world’s critical infrastructure.

I believe that in the modern data center, AI is only as good as the data it receives. My goal is to ensure facilities have the precise sensor facts needed to control AI opinions, ultimately reducing PUE, releasing stranded capacity, and ensuring maximum uptime.