The Evolution of Data Center Cooling: Liquid Cooling

Introduction

Cooling An Entire Digital Infrastructure

What Does This Imply For You?

Liquid Cooling Trends

The Game Changer

Cooling

Power

Technology and business leaders are being asked to deliver more technology solutions while maintaining efficiency. The problem is that data center electricity use keeps rising around the world. According to a recent US Department of Energy analysis, data centers in the United States are expected to need more energy in the coming years if current trends hold, continuing a steady increase that began around 2000.

Cloud

Supporting IT Developments

It’s crucial to remember that data centers are responsible for supporting new and developing use cases. Colocation and hyperscale providers now support advanced solutions in addition to typical converged, hyper-converged, cloud, and virtualization systems. High-performance computing (HPC), which is used for research and data analysis, is one of them, and these systems are being deployed within the confines of traditional data centers. Cooling HPC systems calls for a different method. For example, in 2018, data center leaders at the National Renewable Energy Laboratory’s (NREL) Energy Systems Integration Facility (ESIF) implemented a permanent cold plate, liquid-cooled rack solution for HPC clustering as part of a partnership with Sandia National Laboratories. The design goals were to:

- Cool the information technology equipment using direct, component-level liquid cooling with a power usage effectiveness design target of 1.06 or better;

- Capture and reuse the waste heat produced; and

- Cut the water used as part of the cooling process.

There is no compressor-based cooling system for NREL’s HPC data center. Cooling liquid is supplied from cooling towers.
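To put the 1.06 design target in context, power usage effectiveness (PUE) is conventionally defined as total facility power divided by IT equipment power. The worked example below assumes an illustrative IT load of 1,000 kW; that figure is an assumption chosen for easy arithmetic, not an NREL measurement.

```latex
\[
\mathrm{PUE} = \frac{P_{\text{facility}}}{P_{\text{IT}}}
\]
% Illustrative assumption: P_IT = 1000 kW
\[
P_{\text{facility}} = 1.06 \times 1000\,\text{kW} = 1060\,\text{kW},
\qquad
P_{\text{overhead}} = 1060\,\text{kW} - 1000\,\text{kW} = 60\,\text{kW}
\]
```

In other words, a PUE of 1.06 leaves about 6% of the IT load as overhead for cooling, power distribution, and everything else in the facility.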

HPC isn’t the only area where liquid cooling is being applied to specific use cases. New liquid-cooled systems are being investigated to meet emerging requirements in machine learning, financial services, healthcare, CAD modeling and rendering, and even gaming, while preserving sustainability, reliability, and the highest levels of density.

AKCP Supports The Evolution Of Data

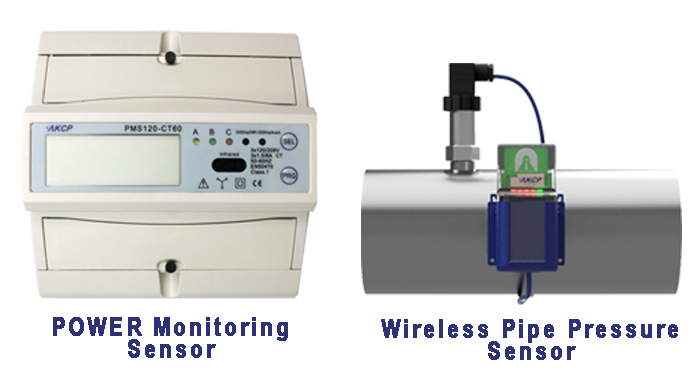

Power Monitoring Sensor

Monitoring the cooling unit’s power requires a power meter that can measure and record real-time power usage. The AKCP Power Monitor Sensor provides this data and lets you monitor power remotely, removing the need for manual power audits and sending instant notifications of possible problems. Power meter readings can also feed the live PUE calculations in SensorProbe+ and AKCPro Server to assess how efficiently your data center uses power. The built-in graphing tool displays the data gathered over time by the Power Monitor Sensor.
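As a rough illustration of how power meter readings translate into a PUE figure, the sketch below divides total facility power by IT equipment power. It is not AKCP code and does not use any AKCP API: the `PowerReading` type, the function names, and the sample values are all hypothetical, and it assumes two meters sampled at equal intervals.

```python
from dataclasses import dataclass


@dataclass
class PowerReading:
    """One sample from two hypothetical meters, in kilowatts."""
    total_facility_kw: float  # everything the site draws, cooling included
    it_equipment_kw: float    # servers, storage, and network gear only


def pue(reading: PowerReading) -> float:
    """Return PUE (total facility power / IT power) for one sample."""
    if reading.it_equipment_kw <= 0:
        raise ValueError("IT load must be positive to compute PUE")
    return reading.total_facility_kw / reading.it_equipment_kw


def average_pue(readings: list[PowerReading]) -> float:
    """Return PUE over several equally spaced samples.

    Summing both quantities before dividing weights each sample by its
    load, rather than averaging the per-sample ratios.
    """
    if not readings:
        raise ValueError("at least one reading is required")
    total = sum(r.total_facility_kw for r in readings)
    it_load = sum(r.it_equipment_kw for r in readings)
    if it_load <= 0:
        raise ValueError("IT load must be positive to compute PUE")
    return total / it_load


# Made-up sample values for illustration only.
samples = [PowerReading(530.0, 500.0), PowerReading(636.0, 600.0)]
print(round(pue(samples[0]), 2))
print(round(average_pue(samples), 2))
```

Summing before dividing is a deliberate choice here: it keeps a brief low-load sample from skewing the result as much as a plain average of ratios would.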

Wireless Pipe Pressure Monitoring

Tank pressure can be monitored by an automatic pressure relief valve fitted with a pressure sensor. A digital pressure gauge monitors all kinds of liquids and gases, with remote monitoring over the internet and alerts and alarms when pressures fall outside pre-defined parameters. It can also be used to upgrade existing analog gauges.
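The idea of alerting when a pressure falls outside pre-defined parameters can be sketched as a simple range check. This is a minimal example under stated assumptions, not the sensor's actual firmware or API: the function name `check_pressure`, the unit (bar), and the 2.0 to 3.0 bar limits are all hypothetical.

```python
from typing import Optional


def check_pressure(reading_bar: float, low_bar: float, high_bar: float) -> Optional[str]:
    """Return an alert message if the reading is outside [low_bar, high_bar].

    Returns None when the reading is within the allowed range.
    """
    if low_bar > high_bar:
        raise ValueError("low_bar must not exceed high_bar")
    if reading_bar < low_bar:
        return f"LOW pressure: {reading_bar:.2f} bar (minimum {low_bar:.2f} bar)"
    if reading_bar > high_bar:
        return f"HIGH pressure: {reading_bar:.2f} bar (maximum {high_bar:.2f} bar)"
    return None


# Hypothetical readings checked against illustrative limits of 2.0 to 3.0 bar.
for value in (1.8, 2.5, 3.4):
    alert = check_pressure(value, low_bar=2.0, high_bar=3.0)
    print(alert or f"OK: {value:.2f} bar")
```

A real deployment would typically add hysteresis or a hold-off time so that a reading hovering near a limit does not trigger repeated alarms.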

Reference Link:

Evolution of Cooling Technologies in Data Center Space

https://datacenterfrontier.com/the-evolution-of-data-center-cooling/

The Evolution of Datacenter Cooling


For over two decades at AKCP, I have been focused on a single mission: bringing complete visibility, security, and efficiency to the world’s critical infrastructure.

I believe that in the modern data center, AI is only as good as the data it receives. My goal is to ensure facilities have the precise sensor facts needed to control AI opinions, ultimately reducing PUE, releasing stranded capacity, and ensuring maximum uptime.