Future of Data Center Cooling

In the digital era, data centers have become indispensable, serving individuals, businesses, cities, and countries. With growing reliance on online information and the Internet of Things (IoT), high-density data centers are on the rise. However, this growth demands more efficient cooling systems to handle the escalating energy consumption. What is the future of data center cooling technologies?

In this article, we will explore various cooling solutions for data centers, with a particular focus on temperature sensors and their role in optimizing cooling efficiency.

Importance of Data Center Cooling

Data center cooling is crucial for maintaining a suitable operating environment for equipment. Research indicates that nearly 40% of a data center's operating expenses are allocated to cooling, and the market is projected to grow at a 3% annual rate between 2020 and 2025.

Failure to provide adequate cooling can lead to hotspots, overheating, and the mixing of hot and cold air. These are the primary causes of data center downtime, which in turn interrupts business operations.

Chillers and computer room air conditioning (CRAC) units were once the simplest way to increase cooling efficiency. However, as data center IT loads increase every year, additional solutions and devices are being brought in to secure the future of data center cooling.

Hot and Cold Aisle Layout

One of the earliest and most effective methods used in data centers is the hot and cold aisle layout, introduced by IBM in 1992. This layout separates hot and cold air into different aisles, resulting in cooling savings of up to 35%. While ideal for new data centers, it can be costly to retrofit into existing facilities and may cause downtime.

Aisle Containment

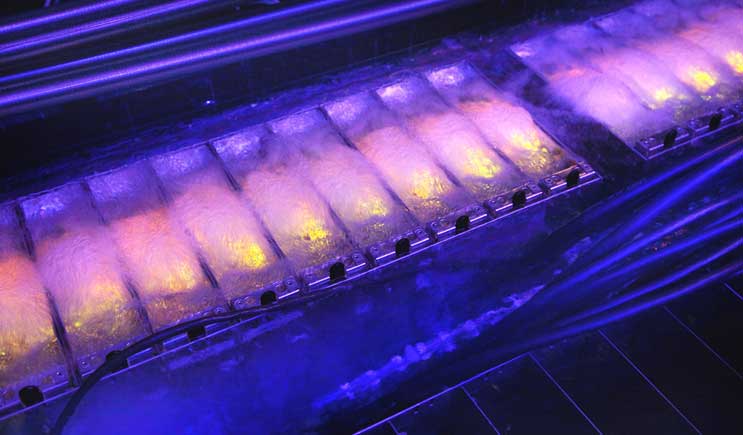

Photo Credit: googleusercontent.com

As rack power densities increased to 10 kW and beyond, containment systems were introduced in modern data centers to improve cooling efficiency. Aisle containment methods include hot aisle containment, cold aisle containment, and chimney containment, each with its own advantages and drawbacks.

- Hot Aisle Containment – Isolates the hot exhaust air from the servers using doors at the ends of the rack rows or ducts from the hot aisle to the cooling units. As the hot air rises, the barriers give it a clear path to the cooling units, which ensures the warmest possible return air. This strategy works in both raised-floor and slab environments. However, it can make the contained aisle extremely hot and uncomfortable for staff working in it.

- Cold Aisle Containment – Contains the cold aisle, making the rest of the room a large hot air return plenum. Plastic curtains, doors at the ends of the rack rows, and ceiling partitions serve as barriers. While this is easier to implement, it has more drawbacks than hot aisle containment.

- Chimney Containment – Contains hot air in a solid metal chimney that directs the hot return air to the overhead return plenum. Chimneys are installed from the back of a single rack or system up to the ceiling return plenum, taking advantage of the natural rise of hot air. Advantages include flexible configuration and the removal of hot air from all occupied spaces.

In-Rack Heat Extraction

With rack power densities exceeding 10 kW, in-rack heat extraction became a mainstream solution. This method extracts the hot air generated by servers inside the racks before it circulates into the server room. While the method is efficient, it makes achieving very high computational density per rack challenging.

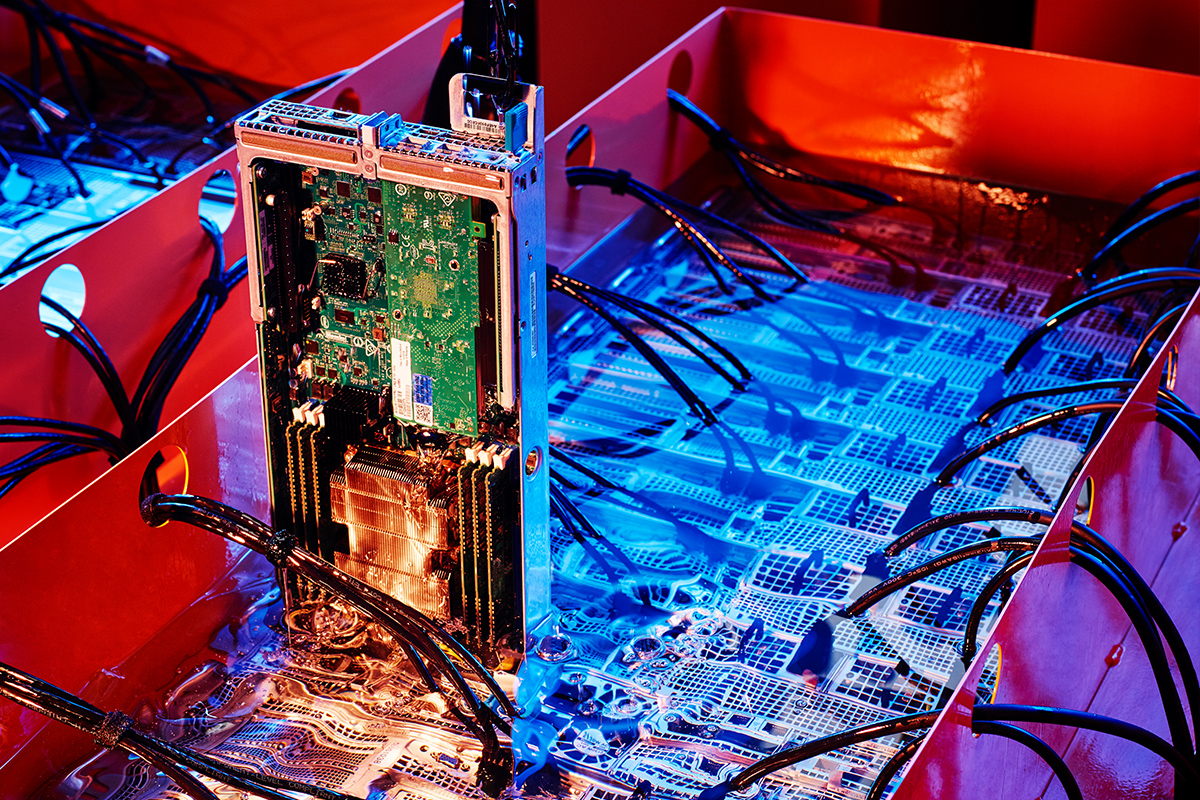

Liquid Cooling

To address the limitations of air-cooled methods, liquid cooling configurations were introduced as rack power densities reached 20 kW. Liquid cooling offers improved efficiency, lower energy consumption, and scalability. It can be implemented through full immersion cooling or direct-to-chip cooling.

Photo Credit: dug.com
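The density thresholds mentioned so far can be summarized in a short Python sketch. This is only an illustration of the article's narrative, not a sizing rule: the function name `suggest_cooling` is hypothetical, and the 10 kW and 20 kW boundaries are simply the figures quoted above, treated here as hard cut-offs for simplicity.

```python
def suggest_cooling(rack_kw: float) -> str:
    """Return the cooling approach this article associates with a rack power density.

    rack_kw is the power drawn by one rack in kilowatts. The cut-offs are the
    10 kW and 20 kW figures quoted in the article, used as an assumption;
    real designs depend on many more factors.
    """
    if rack_kw < 0:
        raise ValueError("rack power density cannot be negative")
    if rack_kw < 10:
        return "hot and cold aisle layout"
    if rack_kw < 20:
        return "aisle containment or in-rack heat extraction"
    return "liquid cooling"


if __name__ == "__main__":
    for density in (6, 12, 25):
        print(f"{density} kW per rack: {suggest_cooling(density)}")
```

In practice the boundaries overlap, and many facilities combine several of these approaches in the same room.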

Monitoring the Future with Temperature Sensors

The future of data center cooling lies in the effective monitoring of cooling systems. Temperature sensors, such as wireless temperature sensors and K-type thermocouples, play a vital role in measuring server surface temperatures, coolant temperatures, and other critical data.

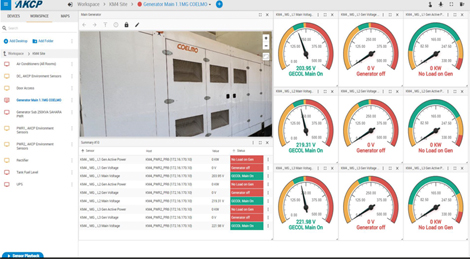
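As a rough illustration of how sensor readings might be used, the Python sketch below flags possible hotspots from a set of rack inlet temperatures. Everything in it is an assumption made for the example: the function name `find_hotspots`, the rack labels, the 27 °C limit, and the 5 °C margin are placeholders, not values taken from any particular sensor product.

```python
from statistics import mean


def find_hotspots(inlet_temps_c: dict[str, float],
                  limit_c: float = 27.0,
                  margin_c: float = 5.0) -> list[str]:
    """Return the racks whose inlet temperature looks like a hotspot.

    A rack is flagged when its reading exceeds limit_c, or when it is more
    than margin_c above the average of all readings. Both thresholds are
    placeholder assumptions and should be tuned per facility.
    """
    if not inlet_temps_c:
        return []
    average = mean(inlet_temps_c.values())
    return sorted(
        rack for rack, temp in inlet_temps_c.items()
        if temp > limit_c or temp - average > margin_c
    )


if __name__ == "__main__":
    # Hypothetical readings in degrees Celsius, keyed by rack label.
    readings = {"rack-A1": 22.5, "rack-A2": 23.0, "rack-B1": 31.2, "rack-B2": 24.1}
    print(find_hotspots(readings))  # ['rack-B1']
```

Checking against the room average as well as a fixed limit helps catch a rack that is drifting warmer than its neighbors before it crosses the absolute threshold.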

Power Monitoring and Efficiency Analysis

Monitoring power consumption is equally important for optimizing cooling efficiency. Power monitoring sensors can track real-time power consumption of cooling units, pumps, and fans. This data can be used to analyze the data center’s power usage effectiveness (PUE) and identify potential issues.
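PUE is defined as total facility energy divided by the energy delivered to IT equipment, so a value closer to 1.0 means less overhead. The Python sketch below computes a snapshot PUE from instantaneous power readings and the share of the overhead attributable to cooling. The function names and the sample figures are hypothetical, and a meaningful PUE is normally averaged over a long period rather than taken from a single reading.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power over IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility power cannot be below IT power")
    return total_facility_kw / it_equipment_kw


def cooling_share_of_overhead(cooling_kw: float,
                              total_facility_kw: float,
                              it_equipment_kw: float) -> float:
    """Fraction of the non-IT (overhead) power that is consumed by cooling."""
    overhead_kw = total_facility_kw - it_equipment_kw
    if overhead_kw <= 0:
        raise ValueError("no overhead power to attribute")
    return cooling_kw / overhead_kw


if __name__ == "__main__":
    # Hypothetical snapshot: 500 kW IT load, 200 kW cooling, 50 kW other overhead.
    it_kw, cooling_kw, other_kw = 500.0, 200.0, 50.0
    total_kw = it_kw + cooling_kw + other_kw
    print(f"PUE: {pue(total_kw, it_kw):.2f}")  # PUE: 1.50
    print(f"Cooling share of overhead: "
          f"{cooling_share_of_overhead(cooling_kw, total_kw, it_kw):.0%}")  # 80%
```

Tracking these two numbers over time shows whether a change to the cooling system actually reduced overhead or only shifted it elsewhere.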

With a comprehensive sensor monitoring system, a complete digital twin of the data center can be created. This virtual representation, combined with sensorCFD™ analysis, improves efficiency and reduces operational costs.

Conclusion

While predicting the future of data center cooling is challenging, it becomes achievable with thorough data analysis provided by sensors. Investing in adaptable monitoring solutions, such as those offered by AKCP, is essential for data centers. AKCP’s sensor solutions are particularly suitable for facilities with critical assets, offering remote capabilities for easy monitoring and ensuring the best possible status for your data center.

To learn more about AKCP’s monitoring solutions, reach out to [email protected]!
