Cooling Tips for Data Center Efficiency

Monitor Cooling Systems

Eliminate Hot Spots in Data Centers

Hot spots in data centers are generally caused by airflow problems, in which cool air does not reach the IT equipment it is meant to cool. To prevent them, distribute cool air evenly and balance heat loads across every rack. Install temperature sensors to monitor the bottom, middle, and top of each rack, using three sensors per rack.

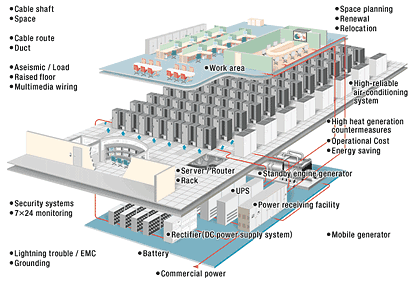
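As a rough illustration, the three-sensors-per-rack approach can be turned into a simple hot-spot check. This is a minimal Python sketch, not an AKCP API: the `RackReading` type and `find_hot_spots` function are hypothetical, the sensors are assumed to measure intake (front) air, and the 27 °C limit is borrowed from the upper end of the ASHRAE recommended inlet range (18 to 27 °C). Substitute your own threshold.

```python
from dataclasses import dataclass

# Assumed threshold: upper end of the ASHRAE recommended inlet range.
# Replace with your facility's own alarm policy.
HOT_SPOT_LIMIT_C = 27.0


@dataclass
class RackReading:
    """Hypothetical snapshot of the three sensors in one rack (°C)."""
    rack_id: str
    bottom_c: float
    middle_c: float
    top_c: float


def find_hot_spots(readings, limit_c=HOT_SPOT_LIMIT_C):
    """Return (rack_id, position, temperature) for every sensor above limit_c."""
    hot = []
    for r in readings:
        positions = (("bottom", r.bottom_c), ("middle", r.middle_c), ("top", r.top_c))
        for position, temp in positions:
            if temp > limit_c:
                hot.append((r.rack_id, position, temp))
    return hot


readings = [
    RackReading("A1", 21.5, 23.0, 24.5),
    RackReading("A2", 22.0, 25.5, 29.0),  # cool air is not reaching the top of A2
]
print(find_hot_spots(readings))
# → [('A2', 'top', 29.0)]
```

A hot reading only at the top of a rack, as in A2, is the typical signature of cool air running out before it reaches the upper equipment.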

Optimize Data Center Design

If your data center has a raised floor, place the hottest racks in front of the perforated tiles. You can also swap in perforated tiles with a different open area to match airflow to each rack's heat load. Do not place CRAC/CRAH units close to the perforated tiles; this causes a “short circuit” of air, in which the cool air flows straight back into the CRAC/CRAH units instead of reaching the IT equipment, depriving the facility of a sufficient supply of cool air.
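To match tiles to heat load, it helps to estimate how much cool air a rack needs. A common rule of thumb comes from the sensible-heat relation BTU/hr = 1.08 × CFM × ΔT (°F), which, with 1 W = 3.412 BTU/hr, works out to roughly CFM ≈ 3.16 × watts / ΔT. The sketch below assumes that relation; the function names are hypothetical and the per-tile airflow is an illustrative figure, since real tile delivery depends on the tile's open area and the underfloor pressure.

```python
import math


def required_cfm(heat_load_w, delta_t_f):
    """Airflow (CFM) needed to remove heat_load_w with a delta_t_f (°F) rise
    across the equipment: CFM = W x 3.412 / (1.08 x dT)."""
    return heat_load_w * 3.412 / (1.08 * delta_t_f)


def tiles_needed(heat_load_w, delta_t_f, cfm_per_tile):
    """Round the required airflow up to a whole number of perforated tiles."""
    return math.ceil(required_cfm(heat_load_w, delta_t_f) / cfm_per_tile)


# Hypothetical rack: 8 kW load, 20 °F rise, tiles assumed to deliver 500 CFM each
print(round(required_cfm(8000, 20)))  # → 1264
print(tiles_needed(8000, 20, 500))    # → 3
```

If the estimate calls for more tiles than the rack can sit in front of, a higher open-area tile is the usual lever, which is the swap described above.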

Bonus Energy Efficiency Tips

AKCP Monitoring Solutions

Environmental Monitoring

Monitor temperature, humidity, airflow, and water leaks to keep track of your data center’s environmental status. Configure rack maps to show the thermal profile of each computer cabinet, checking the temperature at the top, middle, bottom, front, and rear, as well as the temperature differentials between them.
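The idea of watching several sensor types at once can be sketched as a simple threshold check. This is a hypothetical Python example, not how any AKCP product is configured: the dictionary keys, the alarm limits, and the `check_environment` function are placeholders chosen for illustration.

```python
# Hypothetical alarm limits for illustration only; set these from your
# own site policy or your monitoring platform's configuration.
RANGE_LIMITS = {
    "temperature_c": (18.0, 27.0),
    "humidity_pct": (20.0, 80.0),
}


def check_environment(reading):
    """Return a list of alarm messages for one sensor snapshot.

    `reading` is a plain dict with hypothetical keys: numeric temperature
    and humidity values plus boolean airflow and water-leak states.
    """
    alarms = []
    for key, (low, high) in RANGE_LIMITS.items():
        value = reading[key]
        if not low <= value <= high:
            alarms.append(f"{key}={value} outside {low}-{high}")
    if not reading["airflow_ok"]:
        alarms.append("airflow below expected level")
    if reading["water_leak"]:
        alarms.append("water leak detected")
    return alarms


snapshot = {"temperature_c": 24.0, "humidity_pct": 85.0,
            "airflow_ok": True, "water_leak": False}
print(check_environment(snapshot))
# → ['humidity_pct=85.0 outside 20.0-80.0']
```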

Wireless Thermal Mapping of IT Cabinets

Wireless thermal maps cover your IT cabinets with three temperature sensors at the front and three at the rear. They monitor airflow intake and exhaust temperatures and report the temperature differential between the front and rear of the cabinet (ΔT). Wireless thermal maps work with all Wireless Tunnel™ Gateways.
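The front-to-rear ΔT is simply exhaust temperature minus intake temperature. The minimal sketch below assumes three front and three rear readings ordered top, middle, bottom, and reports ΔT at each height plus the cabinet average; the `cabinet_delta_t` function and its input format are hypothetical.

```python
from statistics import mean


def cabinet_delta_t(front_c, rear_c):
    """Return (per-height ΔT list, average ΔT) in °C.

    front_c and rear_c are assumed to hold three readings each,
    ordered top, middle, bottom. ΔT = rear (exhaust) - front (intake).
    """
    if len(front_c) != 3 or len(rear_c) != 3:
        raise ValueError("expected 3 front and 3 rear readings (top, middle, bottom)")
    per_height = [round(r - f, 2) for f, r in zip(front_c, rear_c)]
    average = round(mean(rear_c) - mean(front_c), 2)
    return per_height, average


front = [24.0, 22.5, 21.0]  # intake: top, middle, bottom
rear = [36.0, 35.5, 33.0]   # exhaust: top, middle, bottom
per_height, average = cabinet_delta_t(front, rear)
print(per_height, average)
# → [12.0, 13.0, 12.0] 12.33
```

Tracking each cabinet's ΔT against its own baseline tends to be more informative than any single absolute value; a sudden change at one height can point to a blocked intake or recirculating exhaust.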

Power Monitoring

Wireless Current Meter

AKCP offers a wide range of solutions for data center needs. With over 30 years of experience in professional sensor solutions, AKCP created the market for networked temperature, environmental, and power monitoring in data centers. AKCP is the world’s oldest and largest manufacturer of networked wired and wireless sensor solutions. For more details, visit AKCP at akcp.com.

Monitor single-phase and three-phase power, generators, and UPS battery backup power. AKCP Pro Server performs live Power Usage Effectiveness (PUE) calculations, giving you a complete overview of your power train and showing how adjustments in your data center directly affect your PUE.
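PUE is defined as total facility power divided by IT equipment power, so a value closer to 1.0 means less overhead spent on cooling and power distribution. The sketch below is not AKCP Pro Server's implementation; it only shows the standard ratio, plus the usual formulas for turning current-meter readings into real power (P = V × I × PF for single-phase, P = √3 × V_LL × I × PF for a balanced three-phase load). The function names and all readings are hypothetical.

```python
import math


def single_phase_kw(volts, amps, power_factor=1.0):
    """Real power of a single-phase circuit, P = V x I x PF, in kW."""
    return volts * amps * power_factor / 1000


def three_phase_kw(volts_line_to_line, amps, power_factor=1.0):
    """Real power of a balanced three-phase load, P = sqrt(3) x V_LL x I x PF, in kW."""
    return math.sqrt(3) * volts_line_to_line * amps * power_factor / 1000


def pue(total_facility_kw, it_equipment_kw):
    """Power Usage Effectiveness = total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


# Hypothetical readings
print(round(three_phase_kw(400, 100, 0.95), 1))  # → 65.8
print(pue(1500, 1000))  # → 1.5
# Same IT load after trimming 100 kW of cooling:
print(pue(1400, 1000))  # → 1.4
```

The last two lines show why a live PUE figure is useful: a cooling adjustment that saves 100 kW at the same IT load moves PUE from 1.5 to 1.4.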

Conclusion

For over two decades at AKCP, I have been focused on a single mission: bringing complete visibility, security, and efficiency to the world’s critical infrastructure.

I believe that in the modern data center, AI is only as good as the data it receives. My goal is to ensure facilities have the precise sensor facts needed to control AI opinions, ultimately reducing PUE, releasing stranded capacity, and ensuring maximum uptime.