High-Density Data Center Management

Integrated Data Center Management

- Building Management System (BMS) – Access control, video surveillance, fire alarms, HVAC control, and programmable lighting. Electric power management is also part of this system.

- IT System Management – Hardware, software, database, and network

- Data Center Infrastructure Management (DCIM) – Supports the center’s hardware and software through systems such as power subsystems and uninterruptible power supplies (UPS). It also covers cooling systems and connections to external networks.

Lights-Out Data Center

Advantages of a Lights-Out Data Center

- Design Freedom – Data halls can be laid out freely, without having to accommodate human occupants.

- More Efficient Cooling – Facilities can operate at higher temperatures and humidity without causing a business interruption.

- Minimize Human Errors – A single error, such as mislogging data, can cause problems. According to a study by the Ponemon Institute, human error is the second-highest cause of data center downtime.

- Improved Visibility – It provides visibility into the racks, showing whether servers are aligned and grouped together correctly.

Challenges for High-Density Data Center Management

Risk of Downtime

Power Capacity

Energy Efficiency

Deploying New IT Equipment

Infrastructure Monitoring

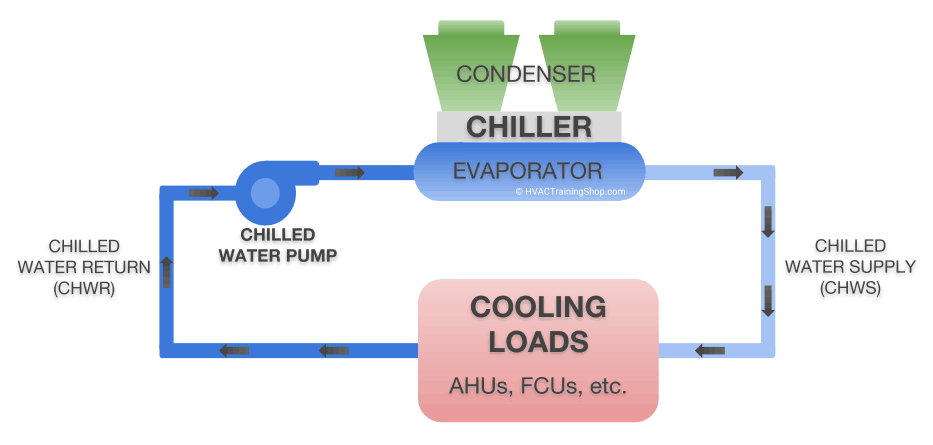

Cooling

Chilled Water Loop System

Controlling Chilled Water Loop System

Wireless Rack Temperature Monitoring

Data center monitoring with thermal map sensors helps identify and eliminate hotspots in your cabinets by finding areas where the temperature differential between front and rear is too high. A thermal map consists of a string of 6 temperature sensors and, optionally, 2 humidity sensors. The sensors come pre-wired for easy installation and are placed at the top, middle, and bottom of the cabinet, at both the front and the rear. This configuration monitors the air intake and exhaust temperatures of your cabinet, as well as the temperature differential from front to rear.
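As a rough illustration of how the six readings might be used, the sketch below computes the front-to-rear differential at each level and flags any level above a limit. The `ThermalMapReading` structure, the function names, the sample temperatures, and the 15 °C limit are all assumptions made for this example; they are not a vendor API or a recommended threshold.

```python
from dataclasses import dataclass

# Hypothetical limit: the article does not say what differential is "too high".
MAX_DELTA_T_C = 15.0

LEVELS = ("top", "middle", "bottom")


@dataclass
class ThermalMapReading:
    """Six temperatures (degrees C) from one cabinet's thermal map string."""
    front: dict  # level name -> intake temperature
    rear: dict   # level name -> exhaust temperature


def delta_t_by_level(reading):
    """Return the rear-minus-front temperature differential at each level."""
    return {level: reading.rear[level] - reading.front[level] for level in LEVELS}


def find_hotspots(reading, max_delta_t=MAX_DELTA_T_C):
    """List the levels whose differential exceeds the limit."""
    deltas = delta_t_by_level(reading)
    return [level for level in LEVELS if deltas[level] > max_delta_t]


# Illustrative sample values, not measured data.
reading = ThermalMapReading(
    front={"top": 24.0, "middle": 22.5, "bottom": 21.0},
    rear={"top": 42.0, "middle": 34.0, "bottom": 30.5},
)
print(delta_t_by_level(reading))
print(find_hotspots(reading))
```

With these sample values only the top of the cabinet exceeds the assumed limit, which is the kind of localized hotspot a thermal map is meant to reveal.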

Obstructions within the cabinet

Cabling or other obstructions can impede airflow, causing a high temperature differential between the inlet and the outlet. The cabinet analysis sensor with pressure differential can also help analyze airflow issues.

Server and cooling fan failures

As fans age or fail, airflow over the IT equipment decreases. This leads to a higher temperature differential between the front and rear.

Insufficient pressure differential to pull air through the cabinet

When the pressure differential between the front and rear of the cabinet is insufficient, airflow is reduced. The less cold air that flows through the cabinet, the higher the front-to-rear temperature differential becomes.
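The link between airflow and temperature differential follows from the sensible heat balance for air, heat load = density × volumetric flow × specific heat × ΔT. The sketch below rearranges that relation to estimate ΔT for a fixed heat load at several airflow rates. The air properties are approximate textbook values, and the 5 kW load and the flow rates are assumptions chosen only for illustration.

```python
AIR_DENSITY = 1.2          # kg/m^3, approximate for air near 20 C at sea level
AIR_SPECIFIC_HEAT = 1005   # J/(kg*K), approximate


def exhaust_delta_t(heat_load_w, airflow_m3_s):
    """Estimate the front-to-rear temperature rise (K) for a cabinet.

    Rearranged from: heat_load = density * airflow * specific_heat * delta_t
    """
    if airflow_m3_s <= 0:
        raise ValueError("airflow must be positive")
    return heat_load_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * airflow_m3_s)


# Hypothetical 5 kW cabinet at three airflow rates.
for airflow in (0.6, 0.4, 0.2):
    print(f"{airflow:.1f} m^3/s -> delta T of {exhaust_delta_t(5000, airflow):.1f} K")
```

Under these assumptions, halving the airflow doubles the temperature differential, which is why a rising differential is a useful early sign of an airflow problem.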

Power Usage Effectiveness (PUE)

When the thermal map data is combined with the power consumption readings from the in-line power meter, you can safely adjust the data center cooling systems without compromising your equipment, while instantly seeing the effect on your PUE.
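PUE is commonly defined as total facility power divided by IT equipment power, so a value closer to 1.0 means less overhead for cooling and power distribution. The sketch below shows how a cooling adjustment would appear in that ratio. The power figures are hypothetical and the function name is chosen for this example.

```python
def pue(total_facility_kw, it_equipment_kw):
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


# Hypothetical readings before and after raising the cooling setpoint.
it_load_kw = 1000.0
before = pue(total_facility_kw=1500.0, it_equipment_kw=it_load_kw)
after = pue(total_facility_kw=1400.0, it_equipment_kw=it_load_kw)

print(f"PUE before: {before:.2f}, after: {after:.2f}")
```

Because the IT load is unchanged in this example, the entire improvement comes from the reduced cooling overhead.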

Air Handling Unit Monitoring

In chilled water cooling systems, having sufficient water in the cooling tower is essential. With wireless tank depth pressure sensors, you can easily monitor the water level and be alerted if it drops below the required level.

Flow meters can be installed to check for water loss, ensuring inflow and outflow are equal.
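The two checks described above, a minimum tank level and matching inflow and outflow, can be sketched as simple comparisons. The function names, units, and the tolerance allowed for meter inaccuracy are assumptions for this example rather than settings from any particular sensor.

```python
def tank_level_alert(level_m, min_level_m):
    """Return True when the measured water level is below the required minimum."""
    return level_m < min_level_m


def flow_imbalance_alert(inflow_lpm, outflow_lpm, tolerance_lpm=2.0):
    """Return True when inflow and outflow differ by more than the tolerance.

    tolerance_lpm is a hypothetical allowance for meter inaccuracy.
    """
    return abs(inflow_lpm - outflow_lpm) > tolerance_lpm


# Illustrative readings, in metres and litres per minute.
print(tank_level_alert(level_m=0.8, min_level_m=1.0))
print(flow_imbalance_alert(inflow_lpm=120.0, outflow_lpm=112.0))
```

In practice the tolerance would be set from the accuracy of the installed flow meters, so that normal measurement noise does not raise false alarms.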

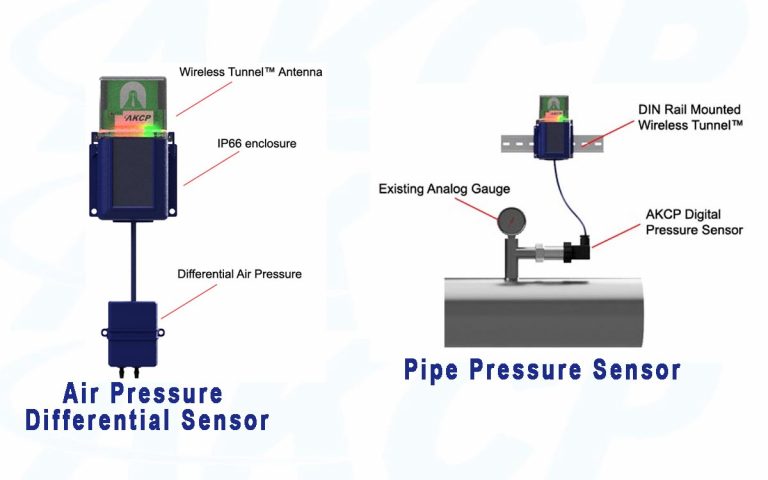

A differential air pressure sensor is installed on the air handling unit (AHU) filter. A high pressure drop across the filter indicates that the filter is dirty and requires maintenance. The sensors are wireless, with a 10-year battery life.
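A minimal sketch of how the filter reading might be turned into a maintenance status is shown below. The warning and replacement limits are hypothetical placeholders; real limits depend on the filter type and the manufacturer's specification.

```python
def filter_status(pressure_drop_pa, warn_pa=150.0, replace_pa=250.0):
    """Classify an AHU filter from the pressure drop across it.

    warn_pa and replace_pa are hypothetical limits chosen for illustration.
    """
    if pressure_drop_pa >= replace_pa:
        return "replace"
    if pressure_drop_pa >= warn_pa:
        return "warning"
    return "ok"


# Illustrative readings in pascals.
for reading_pa in (90.0, 180.0, 260.0):
    print(reading_pa, filter_status(reading_pa))
```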

Wireless Pipe Pressure Sensors monitor the water or gas pressure and temperature on the input and discharge lines from the AHU.

